At H2O.ai, we pride ourselves on developing world-class Machine Learning, Deep Learning, and AI platforms. We released H2O, the most widely used open-source distributed and scalable machine learning platform, before XGBoost, TensorFlow, and PyTorch existed. H2O.ai is home to over 25 Kaggle grandmasters, including the current #1. In 2017, we used GPUs to create the world’s best AutoML in H2O Driverless AI. We have witnessed first-hand how Large Language Models (LLMs) have taken the world by storm.

We are proud to announce that we are building h2oGPT, an LLM that not only excels in performance but is also fully open-source and commercially usable, providing a valuable resource for developers, researchers, and organizations worldwide.

In this blog, we’ll explore our journey in building h2oGPT in our effort to further democratize AI.

Why Open-Source LLMs?

While LLMs like OpenAI’s ChatGPT/GPT-4, Anthropic’s Claude, Microsoft’s Bing AI Chat, Google’s Bard, and Cohere are powerful and effective, they have certain limitations compared to open-source LLMs:

- Data Privacy and Security: Using hosted LLMs requires sending data to external servers. This can raise concerns about data privacy, security, and compliance, especially for sensitive information or industries with strict regulations.

- Dependency and Customization: Hosted LLMs often limit the extent of customization and control, as users rely on the service provider’s infrastructure and predefined models. Open-source LLMs allow users to tailor the models to their specific needs, deploy on their own infrastructure, and even modify the underlying code.

- Cost and Scalability: Hosted LLMs usually come with usage fees, which can increase significantly with large-scale applications. Open-source LLMs can be more cost-effective, as users can scale the models on their own infrastructure without incurring additional costs from the service provider.

- Access and Availability: Hosted LLMs may be subject to downtime or limited availability, affecting users’ access to the models. Open-source LLMs can be deployed on-premises or on private clouds, ensuring uninterrupted access and reducing reliance on external providers.

Overall, open-source LLMs offer greater flexibility, control, and cost-effectiveness, while addressing data privacy and security concerns. They foster a competitive landscape in the AI industry and empower users to innovate and customize models to suit their specific needs.

The H2O.ai LLM Ecosystem

Our open-source LLM ecosystem currently includes the following components:

- Code, data, and models: Fully permissive, commercially usable code, curated fine-tuning data, and fine-tuned models ranging from 7 to 20 billion parameters.

- State-of-the-art fine-tuning: We provide code for highly efficient fine-tuning, including targeted data preparation, prompt engineering, and computational optimizations, to fine-tune LLMs with up to 20 billion parameters (even larger models expected soon) in hours on commodity hardware or enterprise servers. Techniques like low-rank adaptation (LoRA) and data compression allow computational savings of several orders of magnitude.

- Chatbot: We provide code to run a multi-tenant chatbot on GPU servers, with an easily shareable end-point and a Python client API, allowing you to evaluate and compare the performance of fine-tuned LLMs.

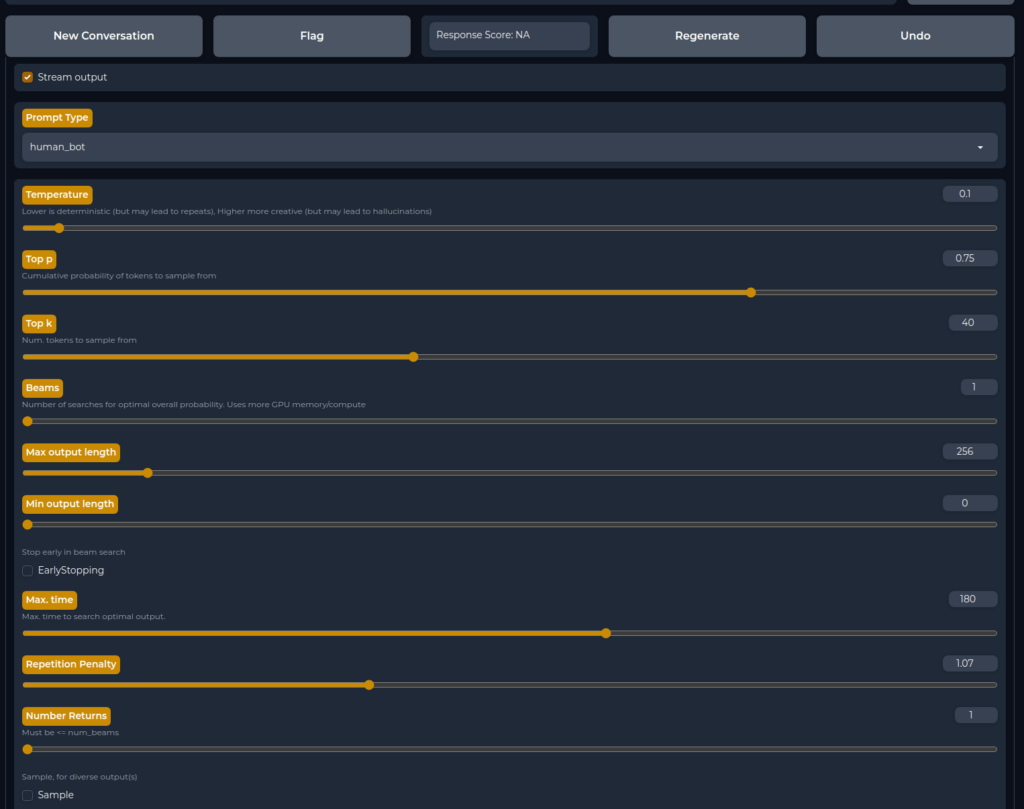

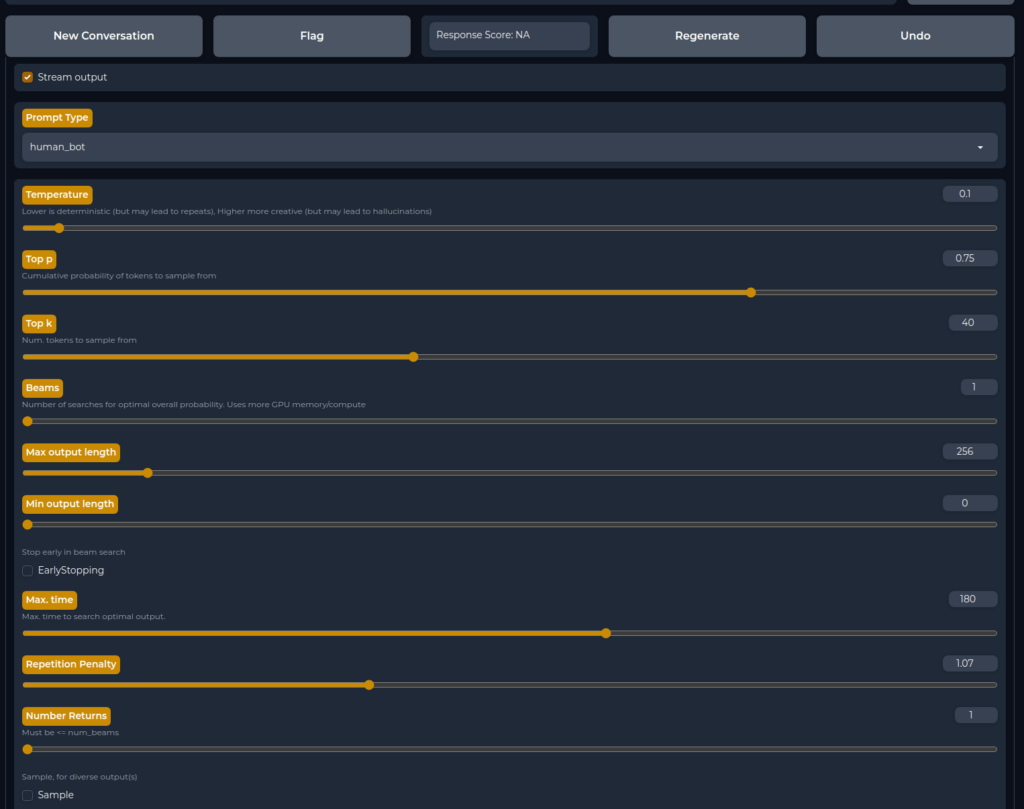

- H2O LLM Studio: Our no-code LLM fine-tuning framework created by the world’s top Kaggle grandmasters makes it even easier to fine-tune and evaluate LLMs.
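To make the LoRA idea from the fine-tuning item above concrete, here is a minimal sketch in plain Python. It illustrates the general technique (freeze a weight matrix W and train only two small matrices A and B of rank r), not the h2oGPT fine-tuning code itself. The function names, the 4096 x 4096 layer size, and the rank of 8 are illustrative assumptions.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha=1.0):
    """Compute y = W x + (alpha / r) * B (A x).

    W: frozen weights, shape d_out x d_in
    A: trainable, shape r x d_in
    B: trainable, shape d_out x r
    """
    rank = len(A)
    base = matvec(W, x)                  # output of the frozen layer
    update = matvec(B, matvec(A, x))     # low-rank correction
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


def lora_param_counts(d_out, d_in, rank):
    """Return (parameters in W, trainable parameters in A and B)."""
    return d_out * d_in, rank * (d_in + d_out)


if __name__ == "__main__":
    # Hypothetical layer size and rank, chosen only for illustration.
    full, lora = lora_param_counts(4096, 4096, 8)
    print(full, lora, full // lora)  # 16777216 65536 256

    # With B set to zero, the adapted layer matches the frozen layer.
    W = [[1, 2], [3, 4]]
    A = [[1, 1]]
    B = [[0], [0]]
    print(lora_forward(W, A, B, [1, 1]))
```

In this hypothetical layer, the adapters hold 256 times fewer trainable parameters than the full matrix. That reduction applies per adapted layer; the end-to-end savings depend on which layers are adapted and on the rest of the training setup.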

Everything we release is based on fully permissive data and models, with all code open-sourced, enabling broader access for businesses and commercial products without legal concerns, thus expanding access to cutting-edge AI while adhering to licensing requirements.

Roadmap and Future Plans

We have an ambitious roadmap for our LLM ecosystem, including:

- Integration with downstream applications and low/no-code platforms (H2O Document AI, H2O LLM Studio, etc.)

- Improved validation and benchmarking frameworks for LLMs

- Complementing our chatbot with search and other APIs (LangChain, etc.)

- Contributing to large-scale data cleaning efforts (Open Assistant, Stability AI, RedPajama, etc.)

- High-performance distributed training of larger models on a trillion tokens

- High-performance scalable on-premises hosting for high-throughput endpoints

- Improvements in code completion, reasoning, mathematics, and factual correctness, with fewer hallucinations and repetitions

Getting Started with H2O.ai’s LLMs

You can chat with h2oGPT right now!

To start using our LLM as a developer, follow the steps below:

- Clone the repository:

    git clone https://github.com/h2oai/h2ogpt.git

- Change to the repository directory:

    cd h2ogpt

- Install the requirements:

    pip install -r requirements.txt

- Run the chatbot:

    python generate.py --base_model=h2oai/h2ogpt-oig-oasst1-256-6.9b

- Open your browser at http://0.0.0.0:7860 or the public live URL printed by the server.
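If you would rather confirm from a script that the chatbot is reachable before opening a browser, a small standard-library check like the one below may help. This is a sketch under assumptions: it only tests whether something answers HTTP at the given address, it does not call any h2oGPT API, and `server_is_up` is a hypothetical helper name. The `localhost` address assumes the server runs on the same machine, on the port 7860 shown above.

```python
import urllib.error
import urllib.request


def server_is_up(url: str, timeout: float = 3.0) -> bool:
    """Return True if an HTTP GET to `url` receives any HTTP response."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just not with a success status.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, and similar errors.
        return False


if __name__ == "__main__":
    # Assumed local address; adjust the host if the server runs elsewhere.
    print(server_is_up("http://localhost:7860"))
```

The model can take a while to load after `generate.py` starts, so a `False` result shortly after launch does not necessarily mean the launch failed.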

For more information, visit the h2oGPT GitHub page, H2O.ai’s Hugging Face page, and the H2O LLM Studio GitHub page.

Join us on this exciting journey as we continue to improve and expand the capabilities of our open-source LLM ecosystem!

Acknowledgements

We appreciate the work by many open-source contributors, especially: