Note: this is a community blog post by Shamil Dilshan Prematunga. It was first published on Medium.

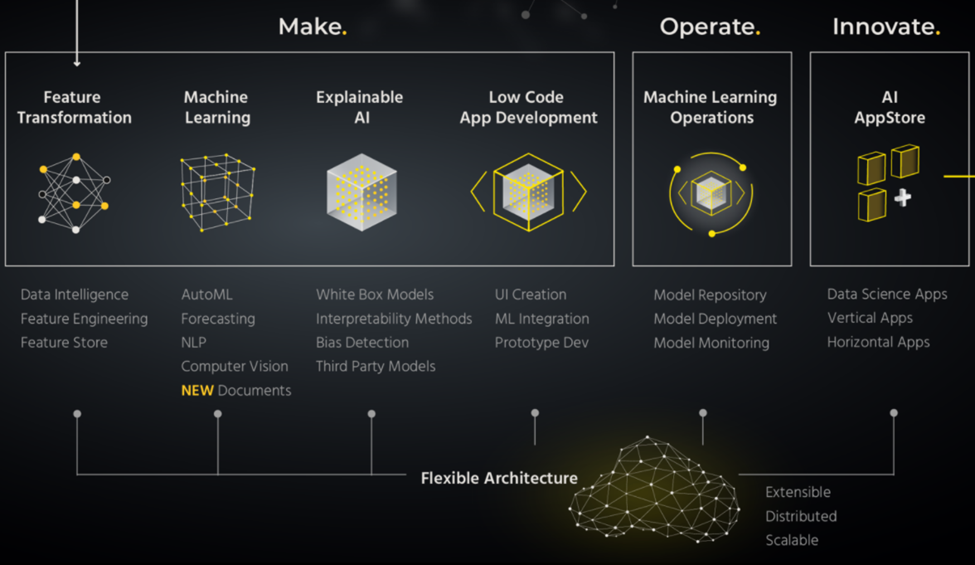

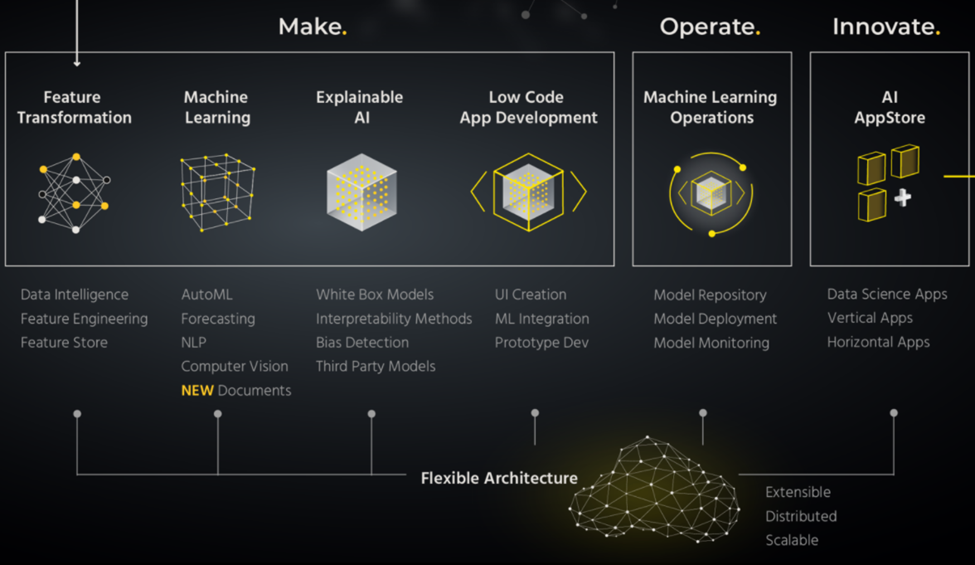

Stepping into the world of AI applications is not a single easy step; it is a series of combined tasks. To turn an idea into a working solution, we must fulfill the requirements of each stage. Existing platforms offer industry-leading solutions for individual stages of the pipeline. In my view, what makes the H2O platform special is that it offers a solution for every step of the pipeline.

- Make – Build accurate models and applications (Driverless AI and H2O Wave).

- Operate – Use Machine Learning Operations to monitor models and rapidly adapt to changing conditions (H2O MLOps).

- Innovate – Deliver innovative solutions to end users (H2O AI App Store).

Figure 1: H2O End-to-End Process

Here I focus on the “Operate” stage, which is covered by H2O MLOps.

What is MLOps?

MLOps is short for Machine Learning Operations. It is a process that provides a seamless handoff between the data scientist and the ML engineer or developer who takes models to production. Looking at the big picture, we can identify three main goals of MLOps:

- Fast experimentation and model development.

- Fast deployment of updated models into production.

- Quality assurance.

Main principles in MLOps

There are five general principles in MLOps:

- Automation – Automate workflow steps so they run without manual intervention.

- Versioning – Track ML models and datasets with version control systems.

- Experiment Tracking – Record the many model-training experiments, which are often executed in parallel.

- Monitoring – Monitor deployed models to ensure they perform as expected.

- Testing – Test features and data, model development, and infrastructure.
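To make the Testing principle concrete, here is a minimal sketch of a data test that could run automatically before each training job. It uses only the Python standard library; the column names (`Rooms`, `Distance`, `Price`) and the function `validate_rows` are hypothetical examples, not part of any H2O API.

```python
import csv
import io
from typing import List

# Hypothetical columns that a house-price training file is expected to contain.
REQUIRED_COLUMNS = ("Rooms", "Distance", "Price")


def validate_rows(csv_text: str) -> List[str]:
    """Run simple data tests; return a list of problems (empty means all checks passed)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    fieldnames = reader.fieldnames or []
    missing = [c for c in REQUIRED_COLUMNS if c not in fieldnames]
    if missing:
        return [f"missing columns: {missing}"]

    problems = []
    for row in reader:
        line_no = reader.line_num  # source line on which this record ended
        for col in REQUIRED_COLUMNS:
            value = row[col]
            if value is None or value.strip() == "":
                problems.append(f"line {line_no}: empty {col}")
                continue
            try:
                number = float(value)
            except ValueError:
                problems.append(f"line {line_no}: non-numeric {col}={value!r}")
                continue
            if number < 0:
                problems.append(f"line {line_no}: negative {col}={number}")
    return problems
```

In an automated pipeline, a non-empty result would stop the run before a model is trained on bad data.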

How does it differ from DevOps?

MLOps can be described as the application of DevOps principles and practices to the machine learning workflow. In addition to the usual CI/CD practices of DevOps, the MLOps pipeline has an extra stage called retraining, because a deployed model must be refreshed as the data it sees changes. Since MLOps deals with machine learning projects, its development life cycle differs somewhat from that of other software.

In DevOps, software engineers write the code while DevOps engineers focus on deployment and on building the CI/CD pipeline. In MLOps, data scientists play the role of software engineers, writing the code that builds the models, while MLOps engineers are responsible for deploying and monitoring those models in production.

Why H2O MLOps?

H2O MLOps has three main sections: Model Repository, Model Deployment, and Model Monitoring. What stands out about the platform is its flexible architecture, which supports ML operations at the production level. Three main pillars describe this flexibility:

- Extensible – The H2O AI Cloud platform provides clients for Python, R, and Java and lets users work with the latest versions of open-source packages. This helps users train, deploy, and customize both H2O.ai and third-party models.

- Distributed – Because the platform uses a Kubernetes-based deployment approach, resource allocation is handled automatically. This is a great advantage, as we can handle large datasets and model training spread across multiple CPUs and GPUs.

- Scalable – The platform uses high-performance NVIDIA GPUs and the CUDA runtime to meet demanding workloads, providing a high-performance service to users.

We can now dig into the main sections covered in H2O MLOps:

- Model Repository – This is the management section of H2O MLOps. The platform acts as a central place in the organization to host and manage all experiments and their associated artifacts. Here we can register experiments as models and manage them transparently with versioning. Besides models from Driverless AI, we can also use third-party models in MLOps.

- Model Deployment – Here we can deploy a registered model in different ways. Besides single-model deployment, the common modes are multi-variant (A/B) mode and champion/challenger mode. For example, we can easily swap the current champion model in production for a challenger model that performs better.

- Model Monitoring – This feature helps us maintain oversight of models in production. In real-world ML projects, model performance can degrade over time because of changes in the data (data drift) and in the relationship being modeled (concept drift). H2O MLOps also provides model feature importance results, an experiment leaderboard, and alerts and notifications.
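The repository and champion-swap ideas above can be sketched with a small in-memory registry. This is a hypothetical stand-in written only for illustration: the classes `ModelRegistry` and `ModelVersion` and the "lower validation error wins" promotion rule are assumptions, not the H2O MLOps API, which you would normally use through its UI or clients.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelVersion:
    name: str
    version: int
    artifact: str   # path or URI of the scoring pipeline, e.g. a mojo.zip (illustrative)
    metric: float   # validation error; lower is better in this sketch


@dataclass
class ModelRegistry:
    """Hypothetical in-memory model repository: versioned models plus a champion pointer."""
    versions: Dict[str, List[ModelVersion]] = field(default_factory=dict)
    champion: Optional[ModelVersion] = None

    def register(self, name: str, artifact: str, metric: float) -> ModelVersion:
        """Add a new version of `name`; version numbers start at 1 and increase."""
        history = self.versions.setdefault(name, [])
        mv = ModelVersion(name, len(history) + 1, artifact, metric)
        history.append(mv)
        return mv

    def promote_if_better(self, candidate: ModelVersion) -> bool:
        """Swap in the candidate as champion if it beats the current one (or none exists)."""
        if self.champion is None or candidate.metric < self.champion.metric:
            self.champion = candidate
            return True
        return False
```

Keeping every version in `versions` while only moving the `champion` pointer means a swap can be reverted by promoting an older version again.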

The practicality of an end-to-end solution

Now let me walk you through the end-to-end process with H2O MLOps. Its simple UI makes the process easy and user-friendly.

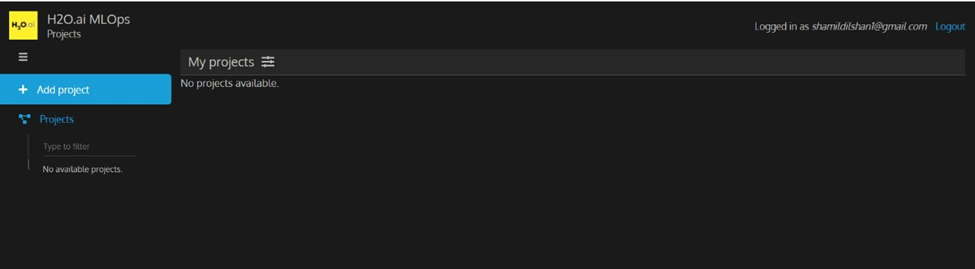

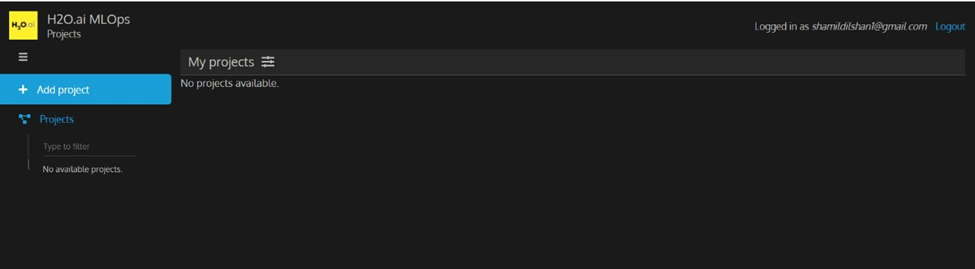

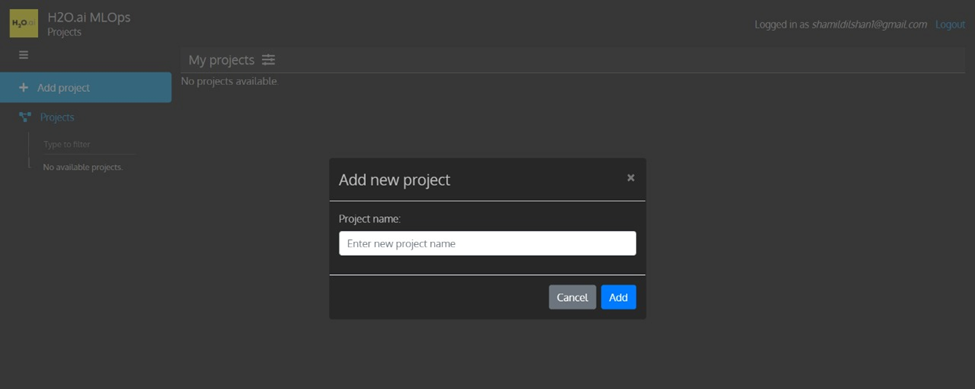

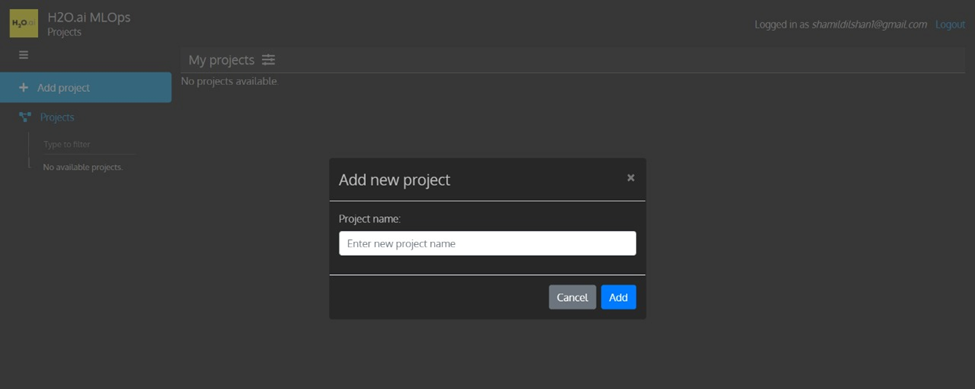

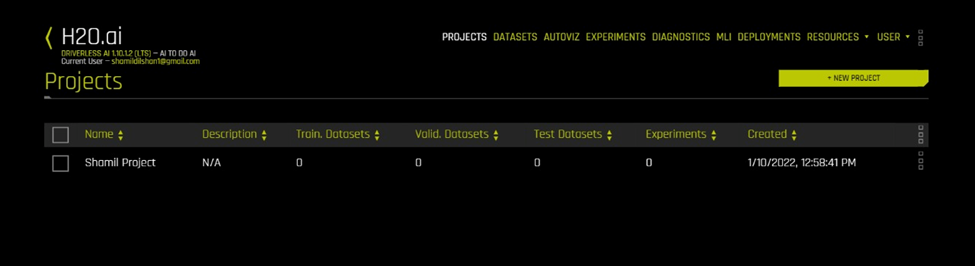

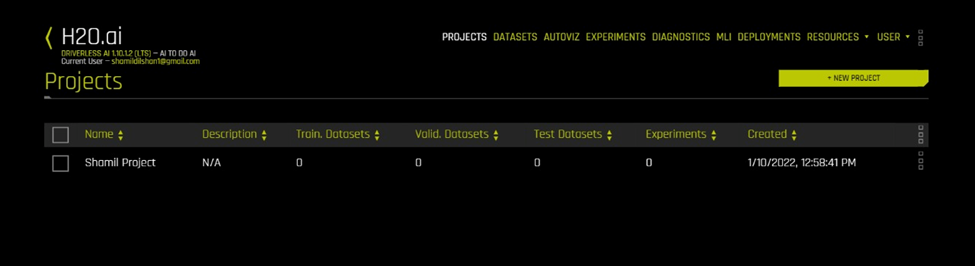

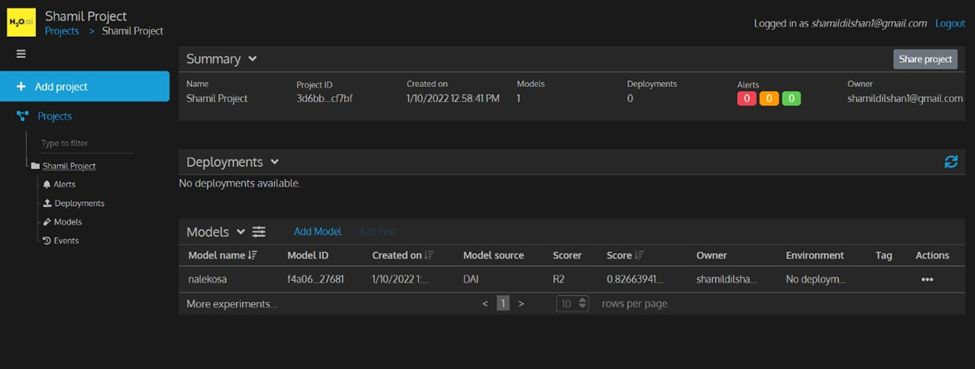

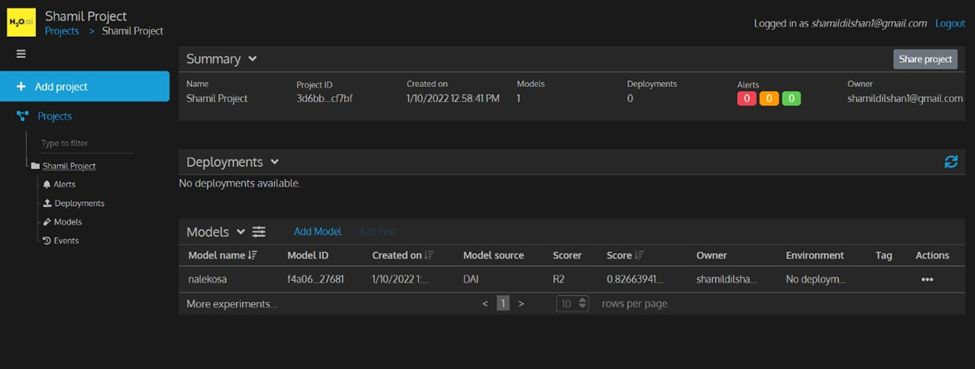

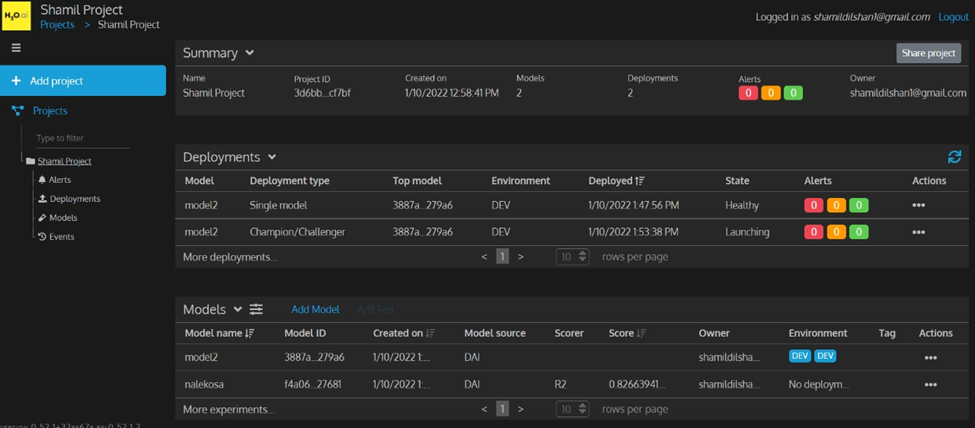

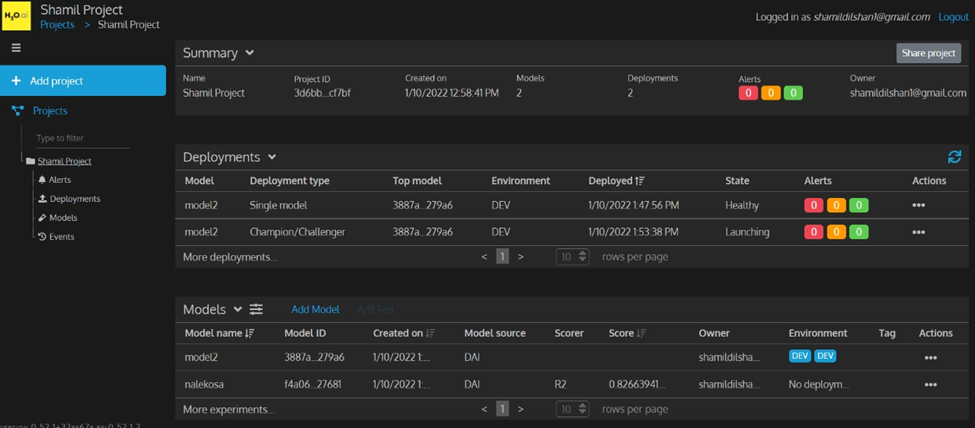

1. Create a project – After logging in to H2O MLOps, you see the interface below, where you can create your project.

Figure 2: H2O MLOps Platform

Figure 3: Create New Project

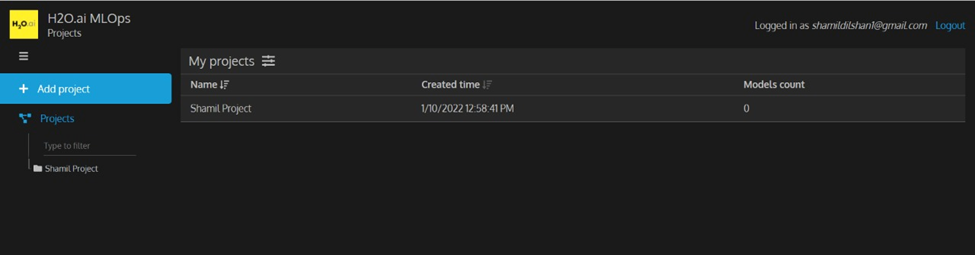

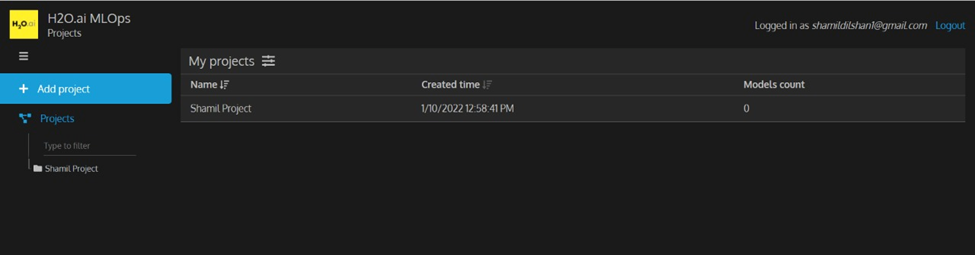

Figure 4: After Creating New Project

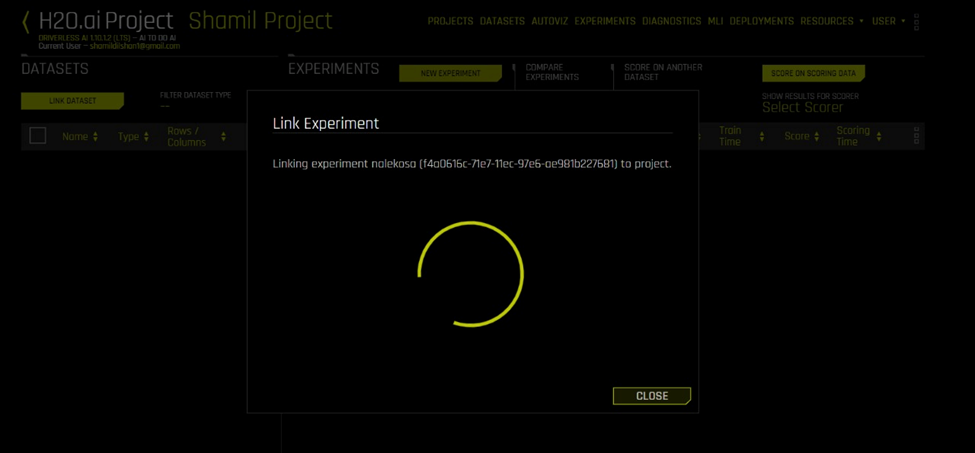

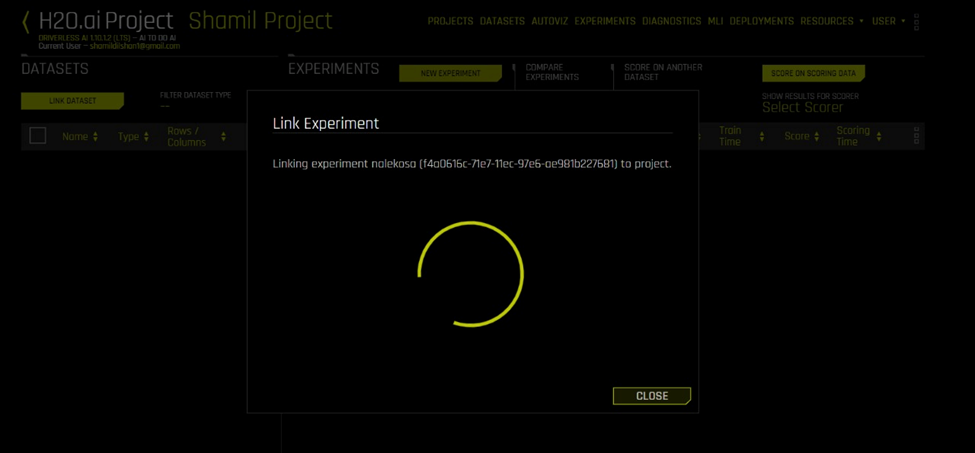

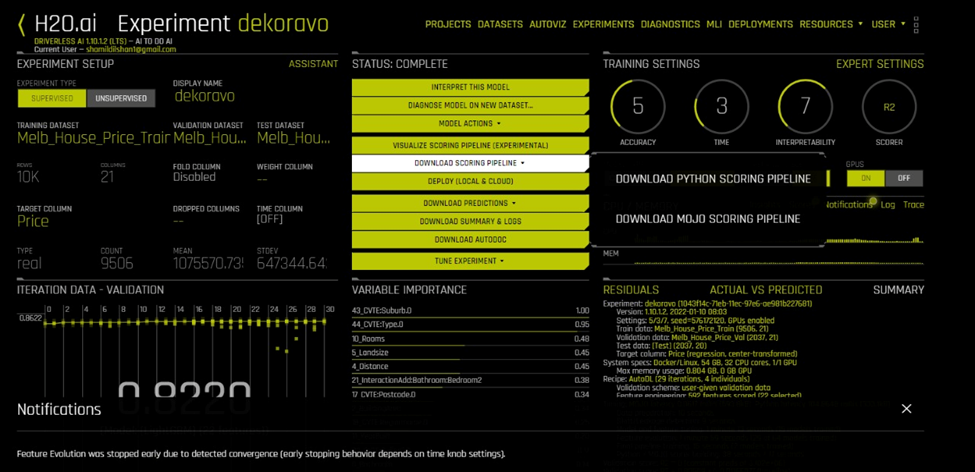

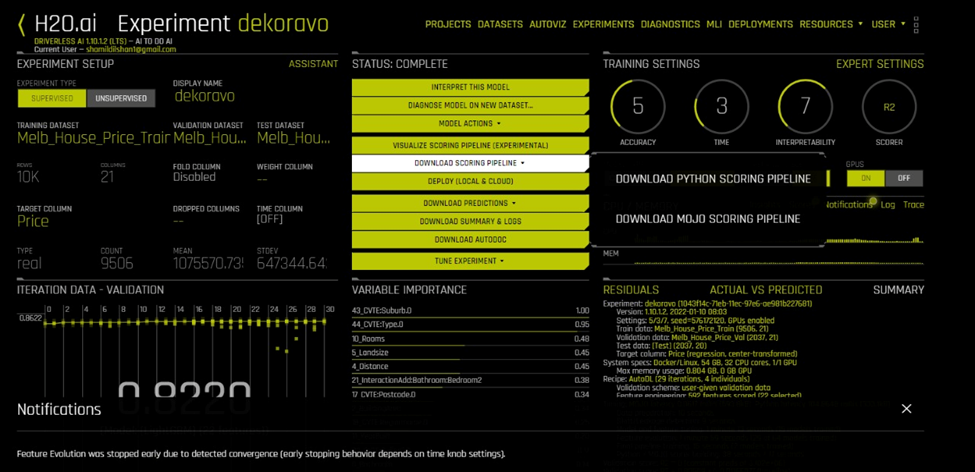

2. In Driverless AI, the project we just created appears in the project list, and we can link any Driverless AI experiment to it. Here I ran an experiment on the Melbourne House Price dataset and linked it to the project. The model then appears in the MLOps platform.

Figure 5: Driverless AI Updated Project List

Figure 6: Create Experiment in DAI

Figure 7: Inside view of the Empty Project

Figure 8: Link Experiment to the Project

Figure 9: MLOps Platform Updated with Linked Experiment

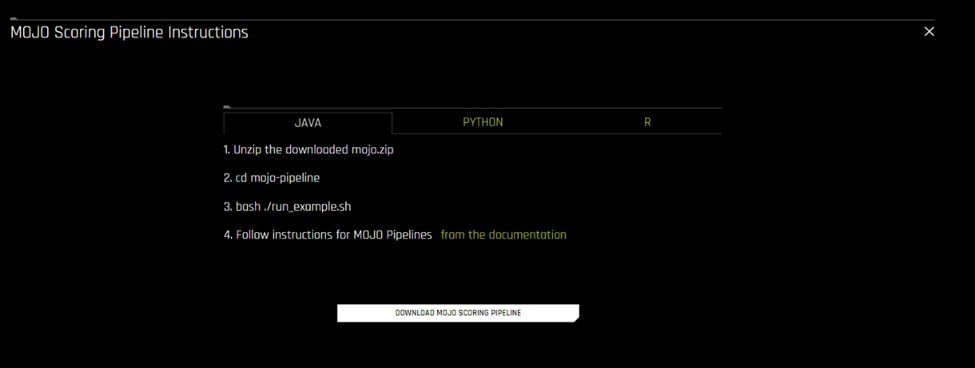

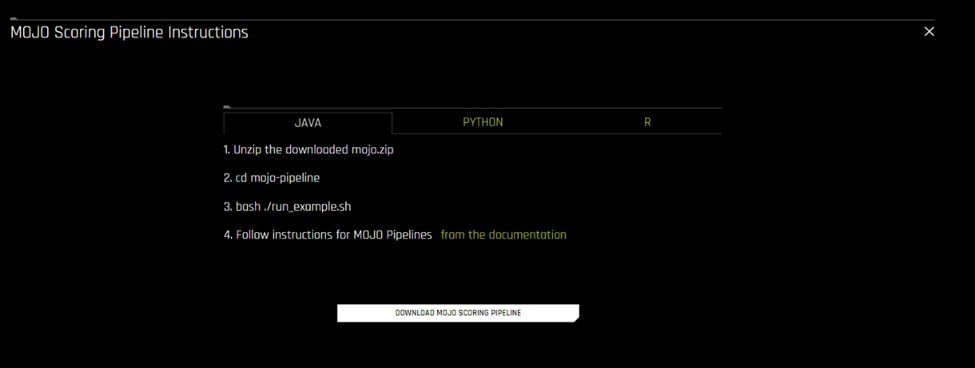

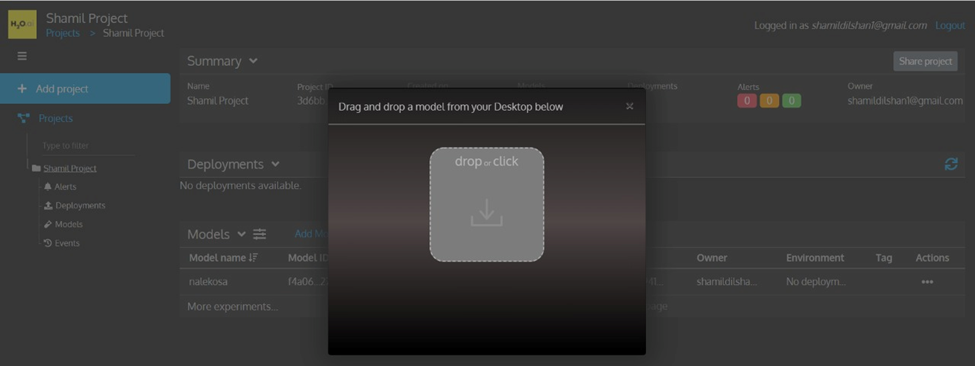

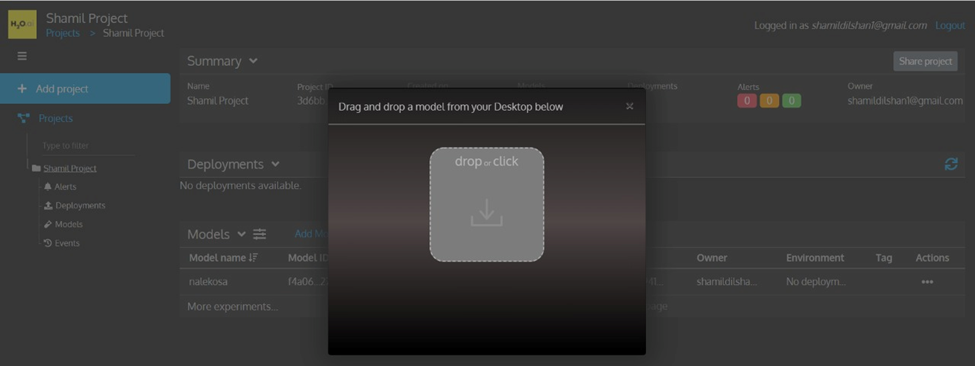

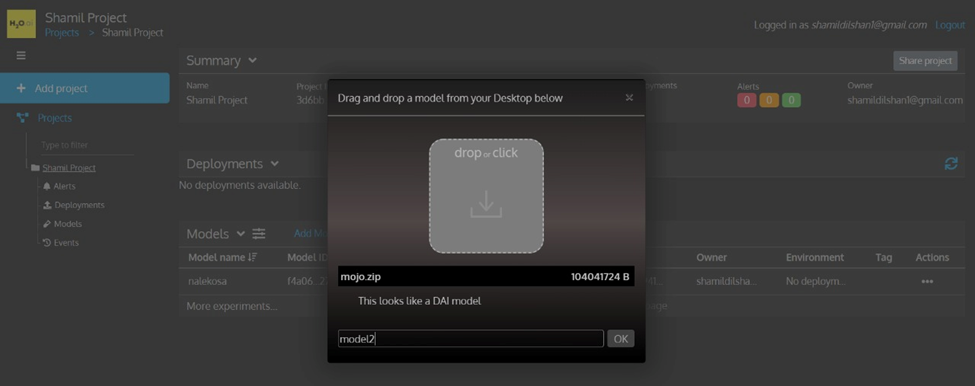

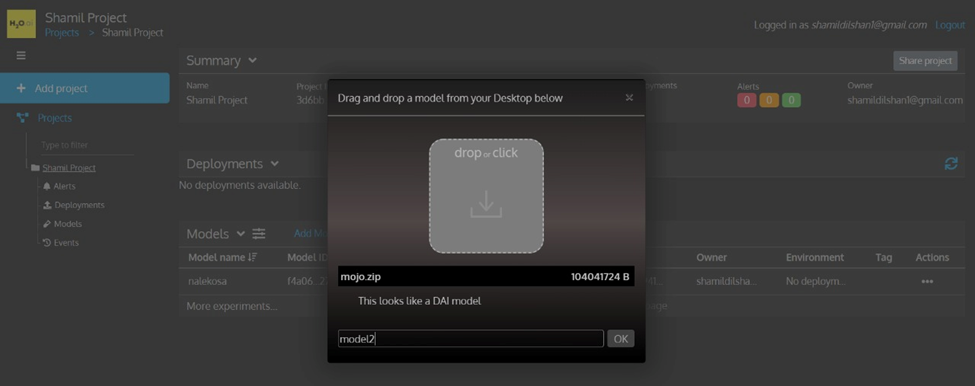

3. Besides linking a Driverless AI model to MLOps directly, we can also import models into the MLOps platform manually. For example, I ran another experiment on the same dataset, downloaded its MOJO scoring pipeline, and imported the pipeline (mojo.zip) into the MLOps platform.
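Before uploading, it can help to sanity-check the downloaded archive. The sketch below assumes the Driverless AI download contains a file named `pipeline.mojo` (typically inside a `mojo-pipeline/` folder); `find_pipeline_member` is a hypothetical helper, not part of the MLOps importer.

```python
import zipfile
from typing import BinaryIO, Union


def find_pipeline_member(source: Union[str, BinaryIO]) -> str:
    """Return the archive member that looks like the MOJO pipeline, or raise ValueError.

    `source` may be a file path or a binary file-like object.
    """
    with zipfile.ZipFile(source) as archive:
        corrupt = archive.testzip()  # name of the first member with a bad CRC, or None
        if corrupt is not None:
            raise ValueError(f"corrupt archive member: {corrupt}")
        members = archive.namelist()
    # Assumption: the Driverless AI download contains a file named pipeline.mojo,
    # typically under a mojo-pipeline/ folder. Adjust if your archive differs.
    matches = [m for m in members if m.rsplit("/", 1)[-1] == "pipeline.mojo"]
    if not matches:
        raise ValueError(f"no pipeline.mojo found; archive contains: {members}")
    return matches[0]
```

For example, calling `find_pipeline_member("mojo.zip")` before dragging the file into the Add Model dialog catches a truncated or wrong download early.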

Figure 10: Download MOJO Pipeline from DAI Experiment

Figure 11: MOJO Download Page

Figure 12: Add Model Interface in MLOps Platform

Figure 13: Drag and Drop Downloaded MOJO from DAI

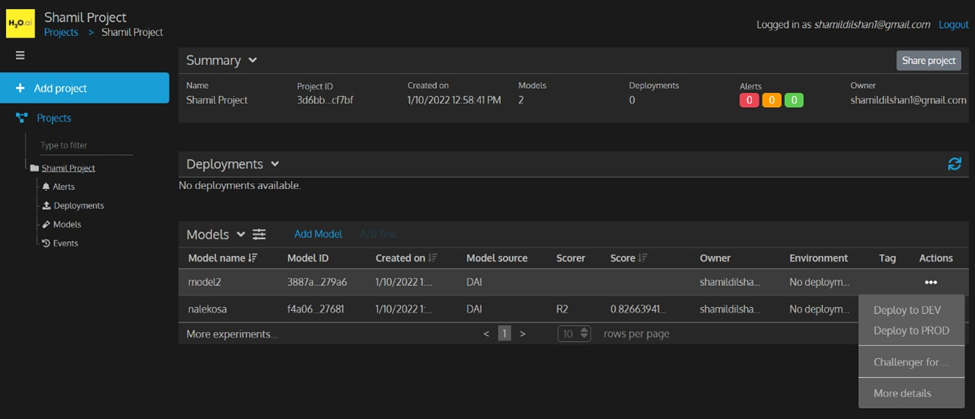

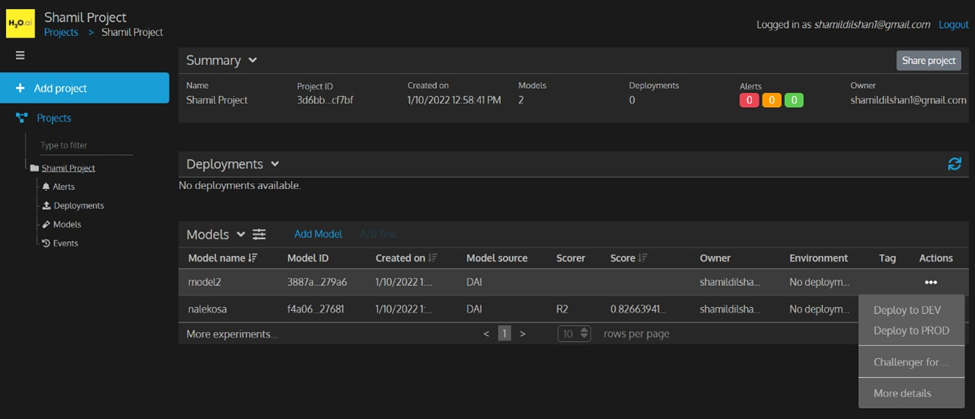

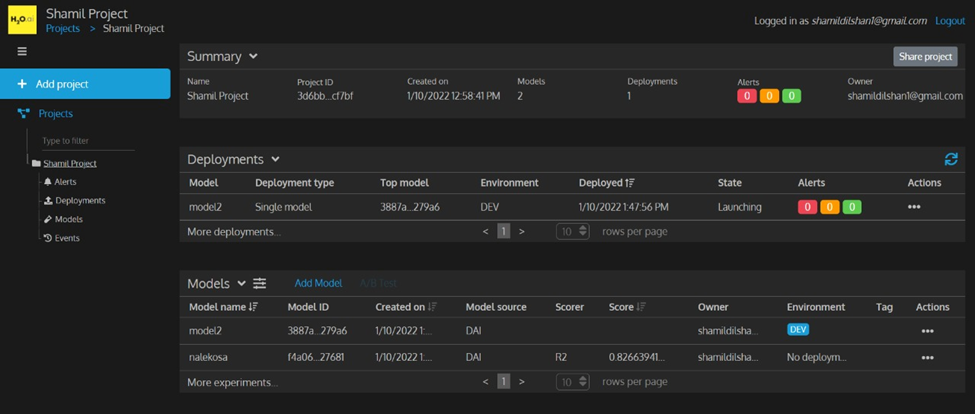

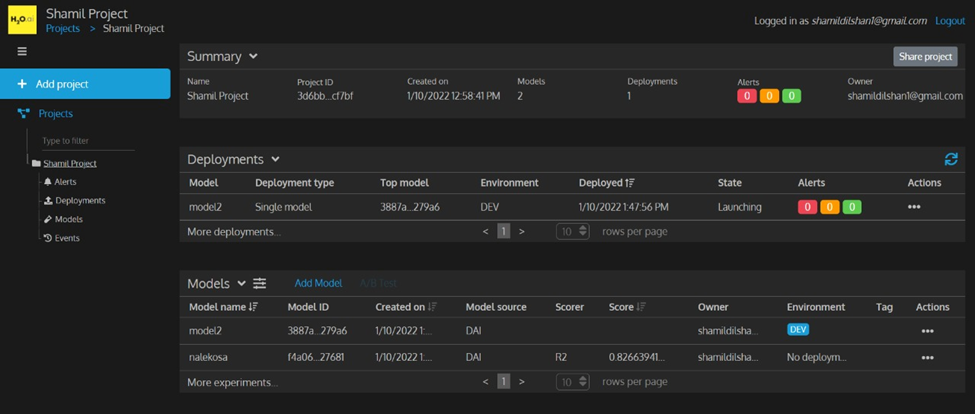

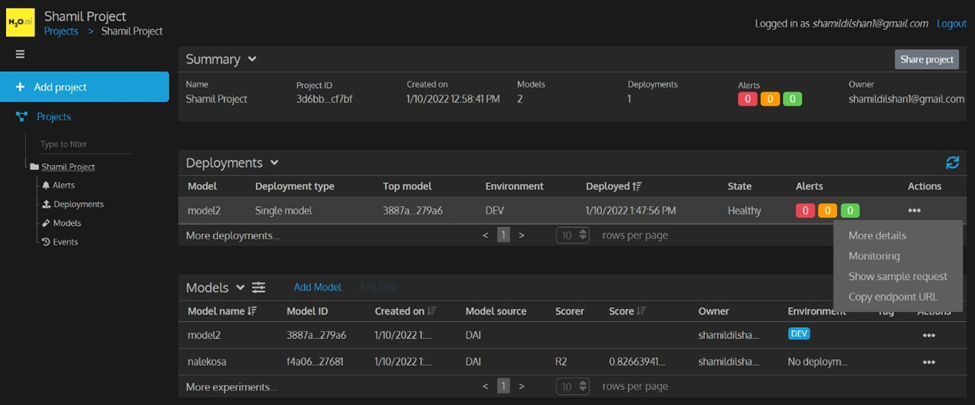

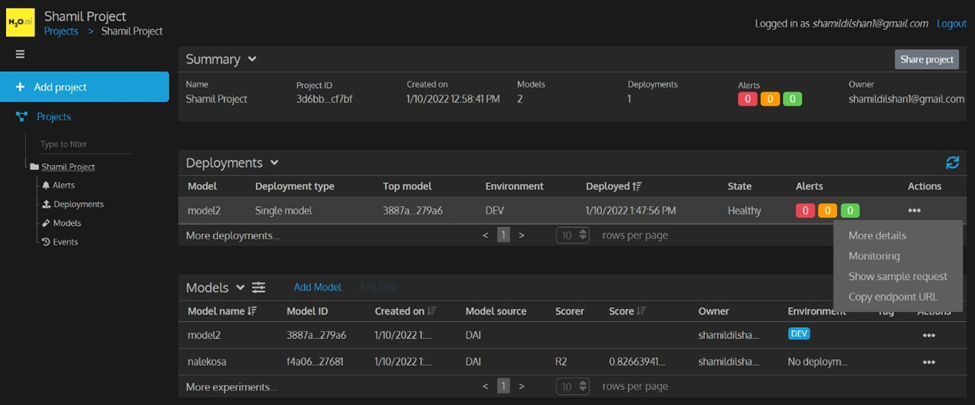

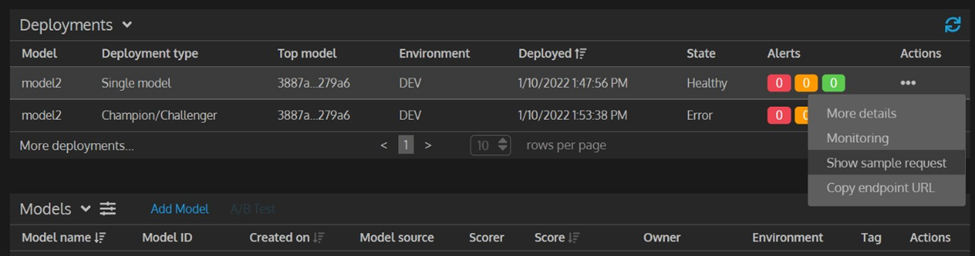

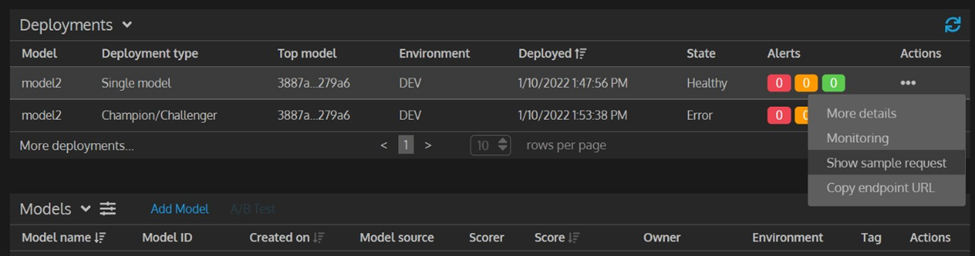

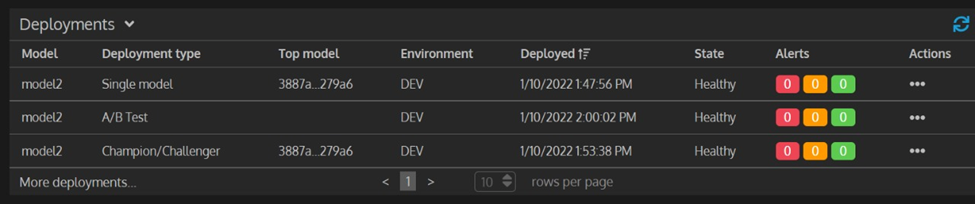

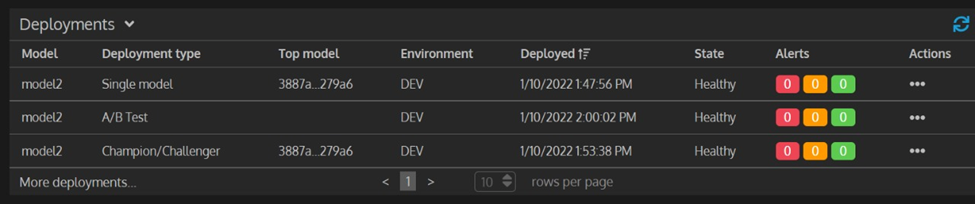

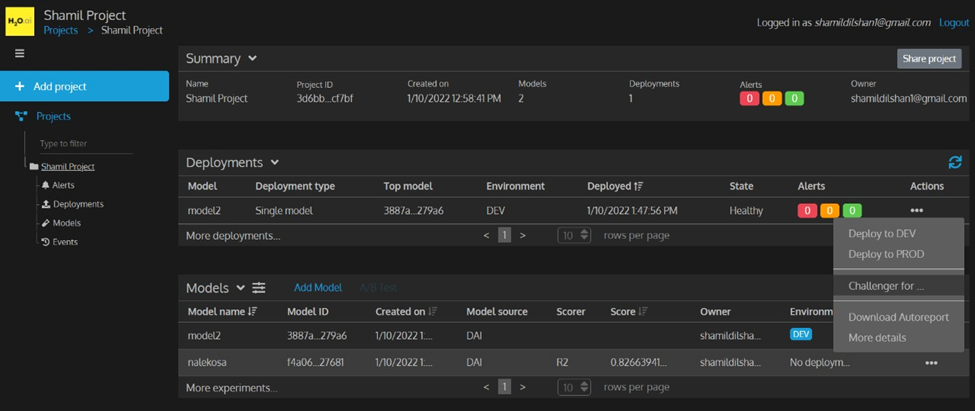

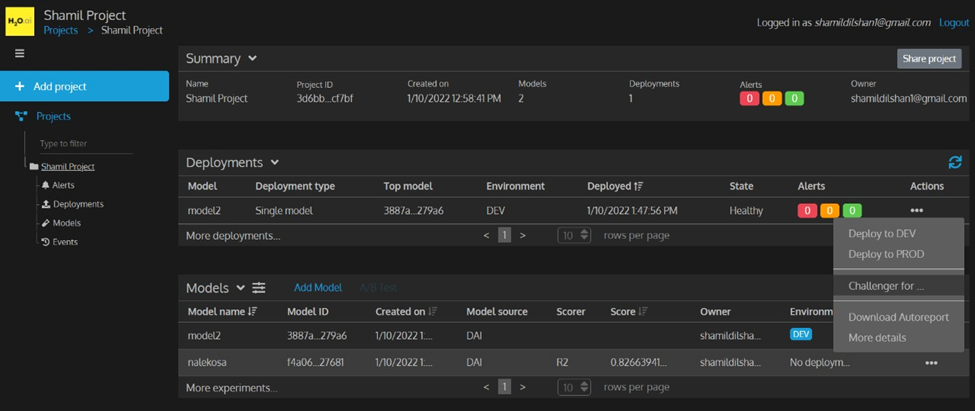

4. We can deploy the imported model to the Dev (development) or Prod (production) environment. First, I tested single-model deployment, the simplest of the three deployment modes. After deployment, the model appears in the Deployments section along with some useful details, and the action bar offers many more options, as shown below.

Figure 14: Model Action Bar

Figure 15: After Deploying Model

Figure 16: Action Bar in Deployments

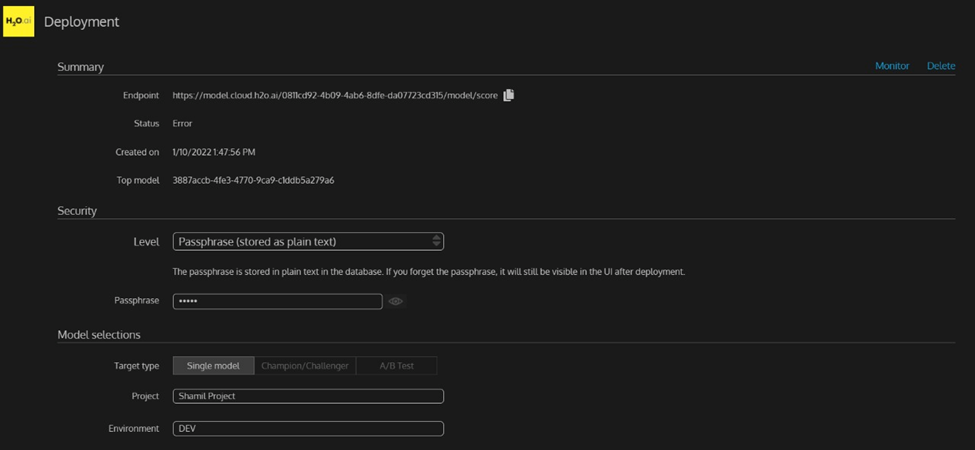

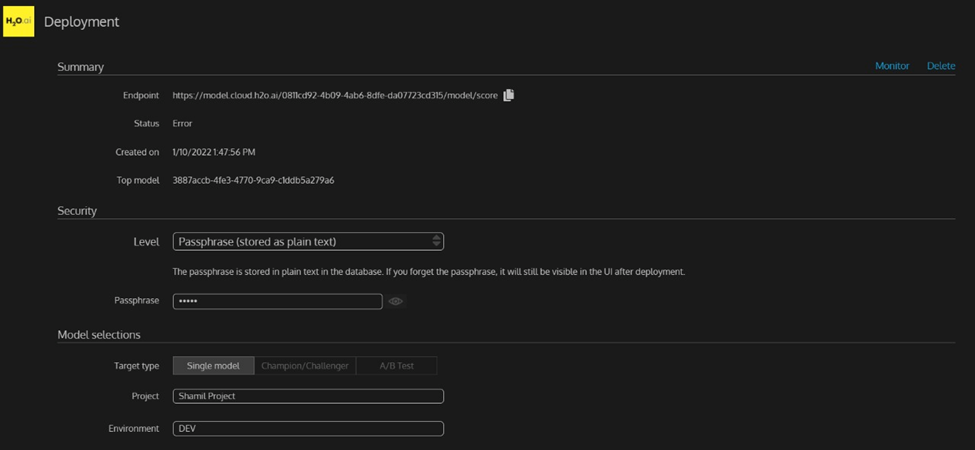

Figure 17: More Details about the Deployment

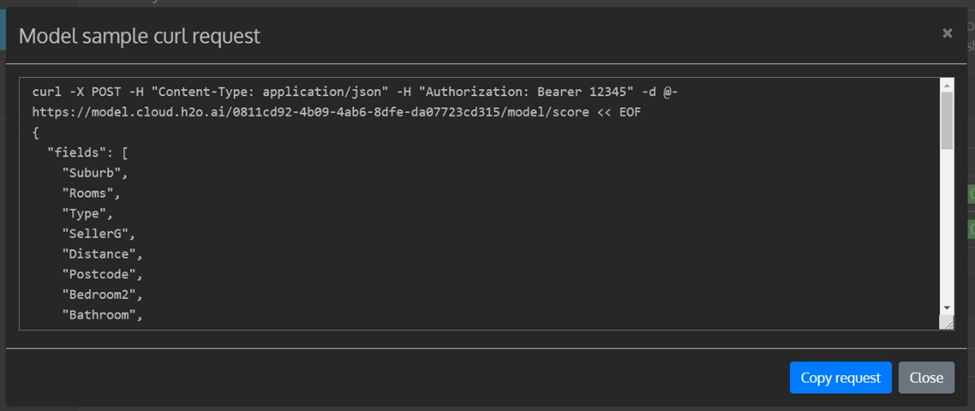

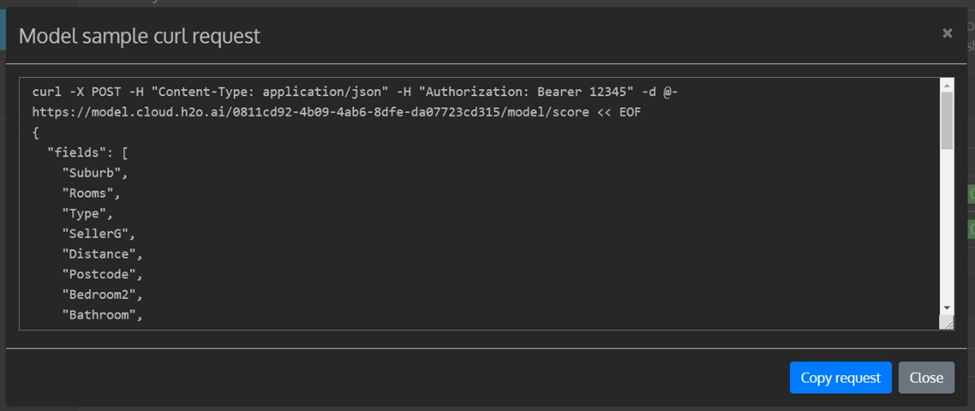

Figure 18: “Show Sample Request” Action

Figure 19: Sample Request Output
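Once “Show Sample Request” has revealed the request format, the same call can be made from code. Below is a minimal sketch using only the Python standard library. It assumes the scorer accepts a JSON body with `fields` (column names) and `rows` (lists of string values), which is the shape the sample request typically uses; check it against the sample request for your own deployment. The helper names are hypothetical.

```python
import json
import urllib.request
from typing import Any, Dict, List


def build_score_request(endpoint_url: str, fields: List[str], rows: List[List[Any]]) -> urllib.request.Request:
    """Build (but do not send) a JSON scoring request in the fields/rows shape."""
    payload = {
        "fields": fields,
        # Assumption: the scorer expects every value as a string, as in the sample request.
        "rows": [[str(v) for v in row] for row in rows],
    }
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def score(request: urllib.request.Request, timeout: float = 10.0) -> Dict[str, Any]:
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

For example, `score(build_score_request(endpoint_url, ["Rooms", "Distance"], [[3, 2.5]]))` would send one row, where `endpoint_url` is copied from the deployment details and the feature names are placeholders. Building the request separately from sending it makes the payload easy to inspect before any network call.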

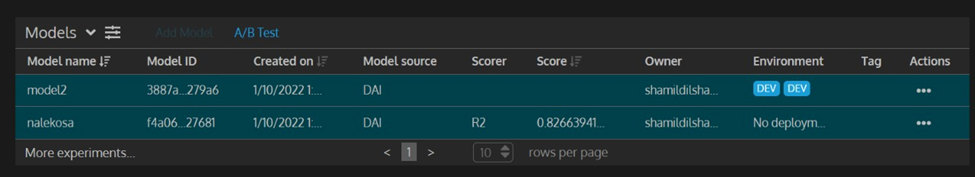

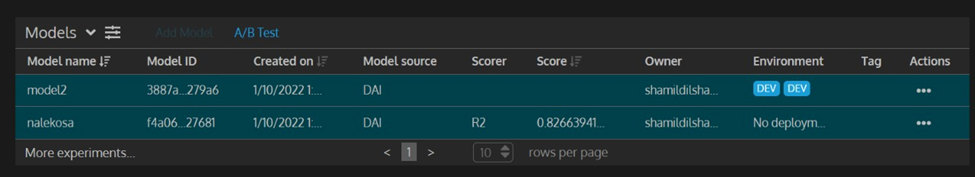

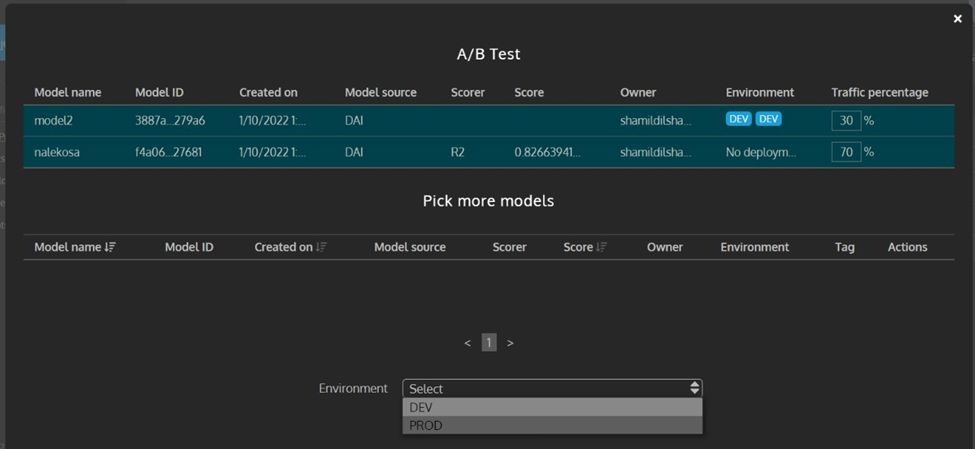

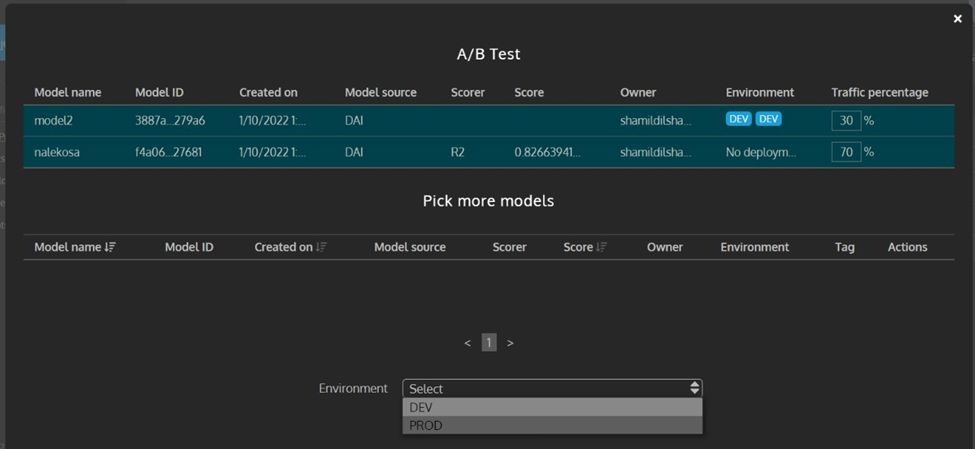

5. Next, I tried the “A/B Test” deployment. Here we select two or more models and click “A/B Test”, which compares the performance of the selected models. We can also set the percentage of traffic routed to each model.
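The traffic percentages act as routing weights. Here is a minimal sketch of weighted routing, assuming each request is independently assigned to a model with probability proportional to its share. `make_router` and the model names are hypothetical, and this is not a description of how H2O MLOps splits traffic internally.

```python
import random
from typing import Callable, Dict, Optional


def make_router(weights: Dict[str, float], seed: Optional[int] = None) -> Callable[[], str]:
    """Return a function that picks a model name with probability proportional to its weight."""
    if not weights or any(w < 0 for w in weights.values()) or sum(weights.values()) <= 0:
        raise ValueError("weights must be non-negative with a positive total")
    rng = random.Random(seed)  # dedicated generator so runs can be reproduced
    names = list(weights)
    shares = [weights[name] for name in names]

    def route() -> str:
        return rng.choices(names, weights=shares, k=1)[0]

    return route
```

With `route = make_router({"model_a": 70, "model_b": 30})`, each call to `route()` returns `"model_a"` roughly 70% of the time. Production A/B setups often hash a stable user or request ID instead of drawing at random, so the same caller always reaches the same variant.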

Figure 20: Select Multiple Models to the A/B Deployment

Figure 21: Add Traffic Percentages

Figure 22: View After the Deployment

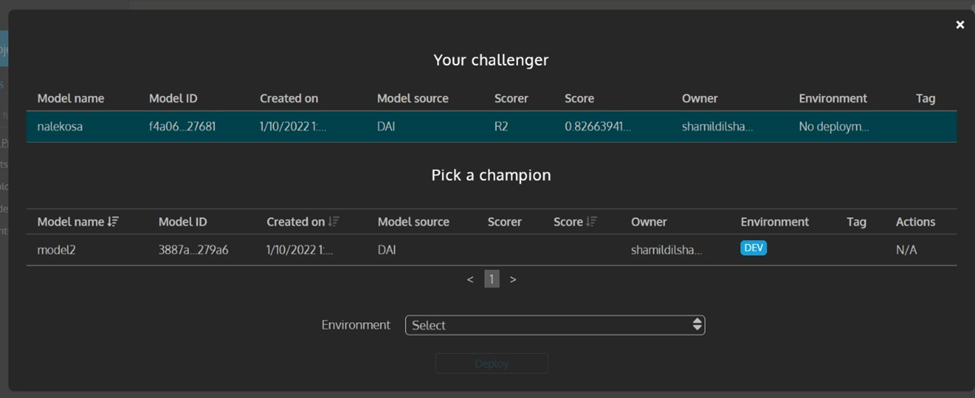

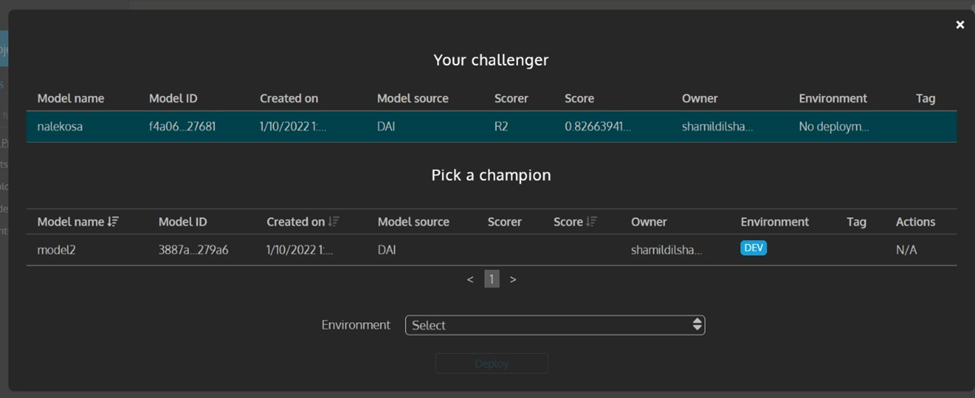

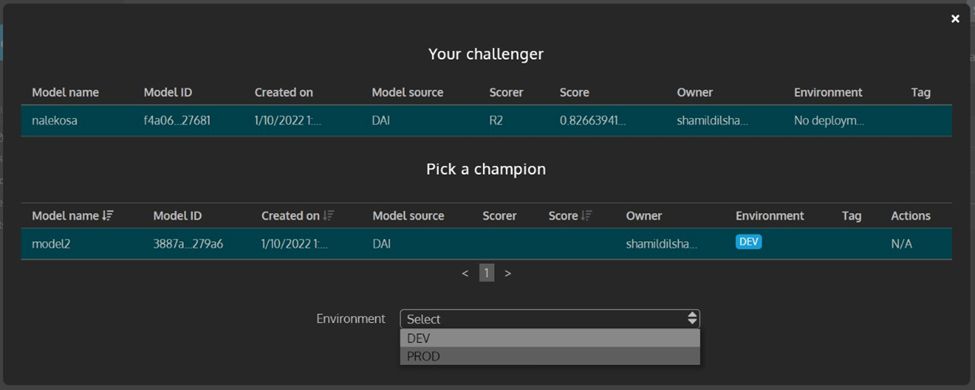

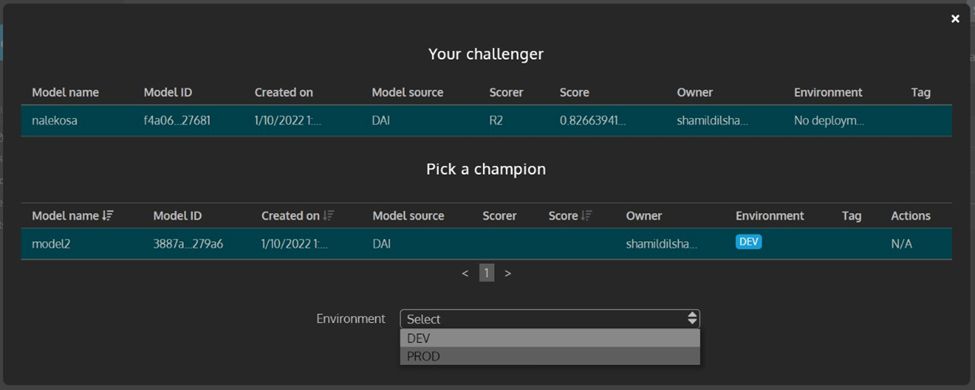

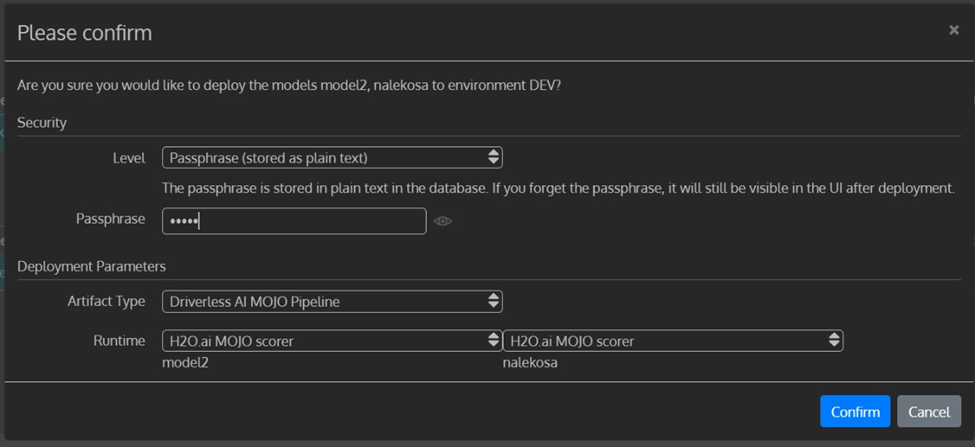

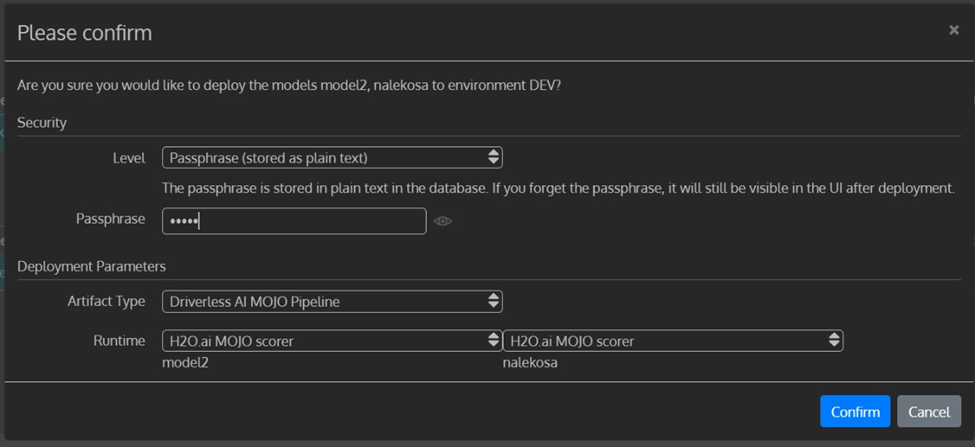

6. For the third and final deployment mode, I tested the “Champion/Challenger” deployment. Here MLOps lets us continuously compare our preferred model (the champion) with a challenger model. We open the action bar next to the challenger model, fill in the pop-up dialog, and deploy it as shown below.
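The champion/challenger idea can be sketched as shadow scoring: both models score every request, only the champion's prediction is returned, and both models' errors are tracked once the true value is known. The class below is a hypothetical illustration, and mean absolute error is an assumed comparison metric; H2O MLOps's own comparison logic may differ.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical model interface: a function from a feature dict to a predicted value.
Model = Callable[[dict], float]


@dataclass
class ChampionChallenger:
    champion: Model
    challenger: Model
    pending: Dict[str, Tuple[float, float]] = field(default_factory=dict)
    errors: Dict[str, List[float]] = field(
        default_factory=lambda: {"champion": [], "challenger": []})

    def predict(self, request_id: str, features: dict) -> float:
        """Score with both models but return only the champion's prediction."""
        champ = self.champion(features)
        chall = self.challenger(features)  # shadow prediction, never returned to the caller
        self.pending[request_id] = (champ, chall)
        return champ

    def record_actual(self, request_id: str, actual: float) -> None:
        """Once the true value is known, log each model's absolute error."""
        champ, chall = self.pending.pop(request_id)
        self.errors["champion"].append(abs(champ - actual))
        self.errors["challenger"].append(abs(chall - actual))

    def mean_abs_error(self) -> Dict[str, float]:
        return {name: sum(errs) / len(errs) for name, errs in self.errors.items() if errs}

    def challenger_wins(self) -> bool:
        mae = self.mean_abs_error()
        return len(mae) == 2 and mae["challenger"] < mae["champion"]
```

Because callers only ever see the champion's output, the challenger can be evaluated on live traffic without affecting users; `challenger_wins()` is the signal for a swap.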

Figure 23: Challenger Selection Action

Figure 24: Champion Model Selection Step

Figure 25: Deployment Environment Selection Step

Figure 26: Add Security Level

Figure 27: After Deploying in All Three Formats

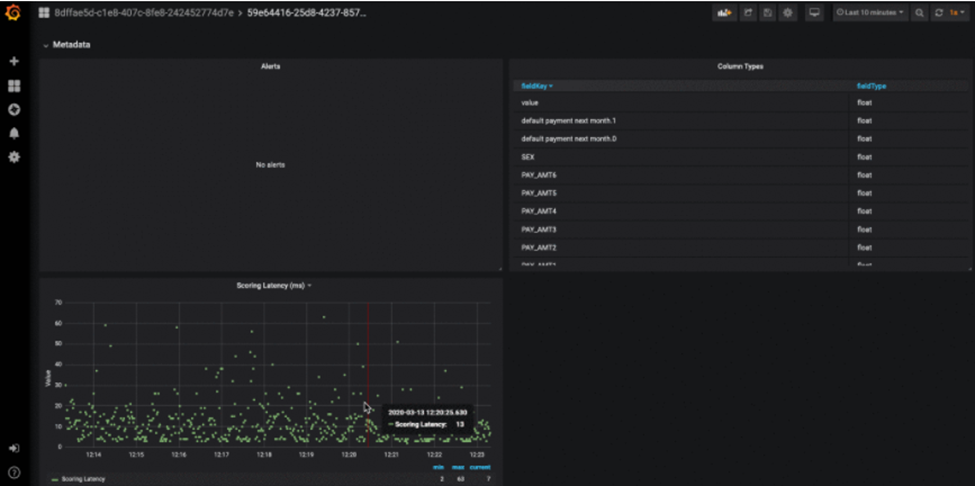

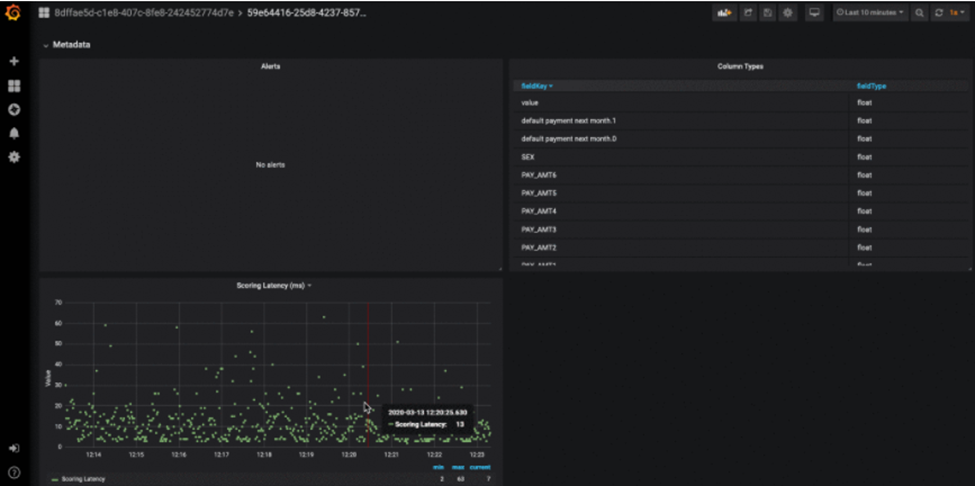

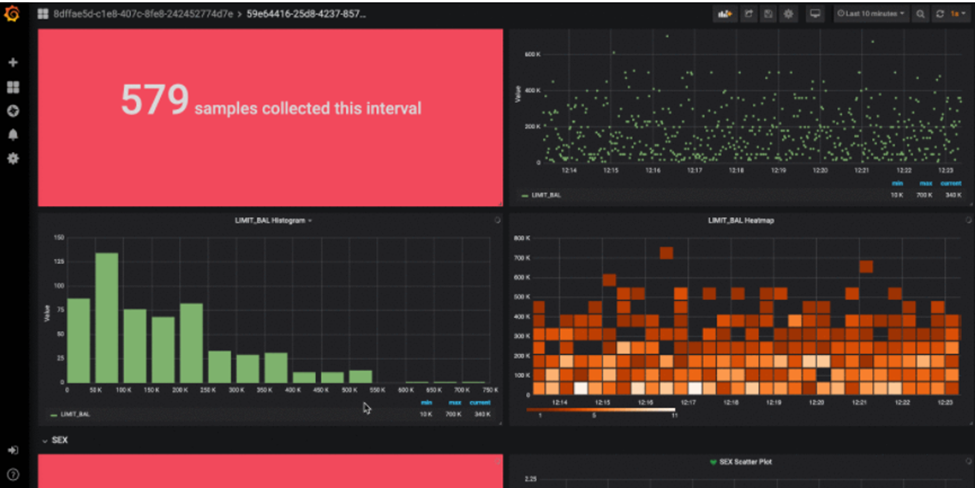

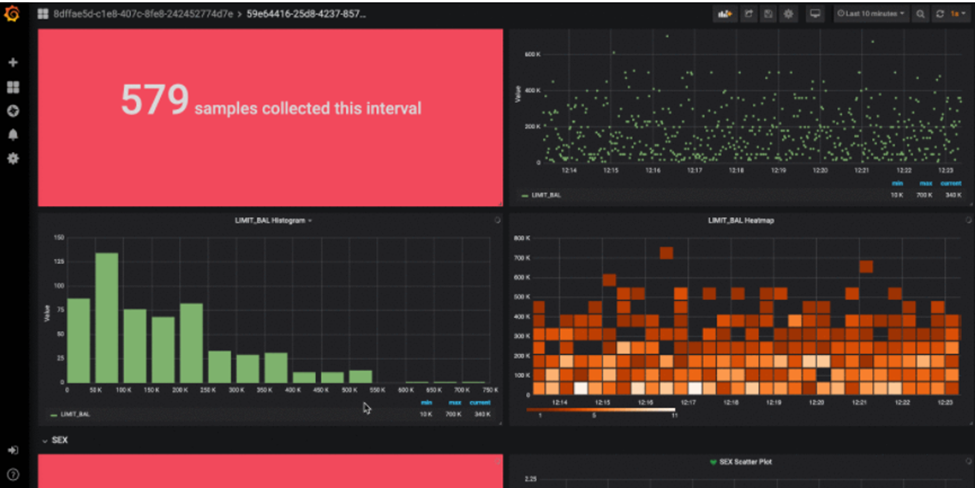

7. After deployment, we can continuously monitor model performance on a dashboard with alerts and warnings. The sample output below is taken from the H2O MLOps documentation.
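To illustrate what drift monitoring computes, here is a sketch of one common drift metric, the Population Stability Index, PSI = Σ (a_i − e_i) · ln(a_i / e_i), where e_i and a_i are the shares of training and production values in bin i. PSI, the equal-width binning, and the 0.1/0.25 thresholds are swapped-in, rule-of-thumb choices, not necessarily what H2O MLOps uses.

```python
import bisect
import math
from typing import List, Sequence


def equal_width_edges(reference: Sequence[float], bins: int = 10) -> List[float]:
    """Bin edges spanning the reference (training) data in equal-width steps."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins
    return [lo + i * width for i in range(bins + 1)]


def bin_shares(values: Sequence[float], edges: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Fraction of values in each bin; eps keeps empty bins from producing log(0)."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        # Values outside the reference range are clipped into the first or last bin.
        idx = min(max(bisect.bisect_right(edges, v) - 1, 0), n_bins - 1)
        counts[idx] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    edges = equal_width_edges(reference, bins)
    ref = bin_shares(reference, edges)
    cur = bin_shares(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def drift_alert(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI score to a status using common rule-of-thumb thresholds."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warning"
    return "ok"
```

Running `drift_alert(psi(training_values, production_values))` per feature on a schedule gives the kind of status that a monitoring dashboard turns into warnings and alerts.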

Figure 28: Model Monitoring Step

Figure 29: Model Monitoring Step

Conclusion

Rather than juggling different platforms when working on machine learning projects, it is better to have a single platform that turns an idea and its data into a usable end product. If you are a beginner, try a free demo; hands-on experience is the best way to learn.