Operationalizing models is critical for companies to get a return on their machine learning investments, but deployment is only one part of that process. With H2O.ai’s latest Snowflake Integration Application, authorized Snowflake users can deploy models themselves, which shortens deployment timelines and provides a level of self-service that speeds up time to value.

H2O.ai provides a comprehensive suite of capabilities surrounding machine learning operations that support data scientists and machine learning engineers in the deployment, management and monitoring of their models in production.

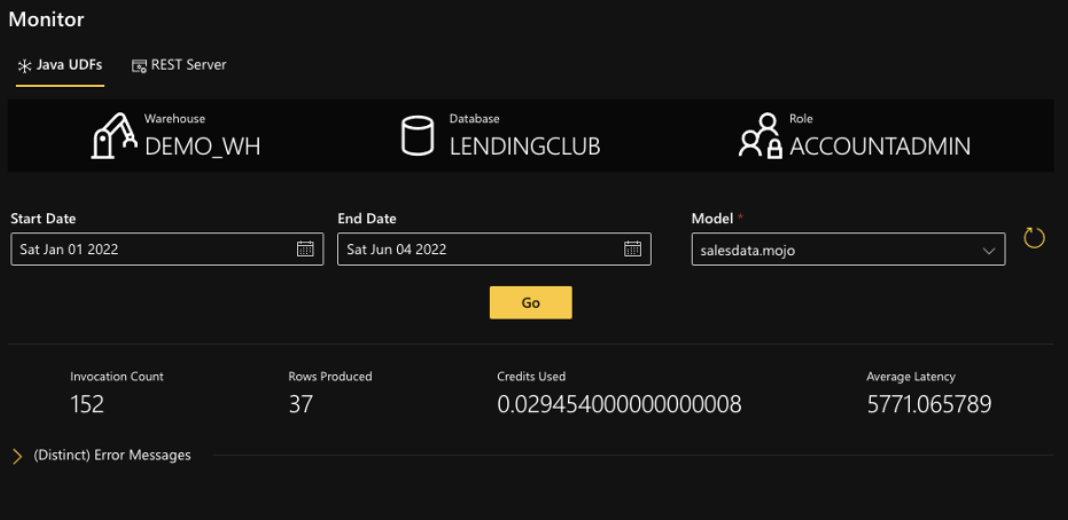

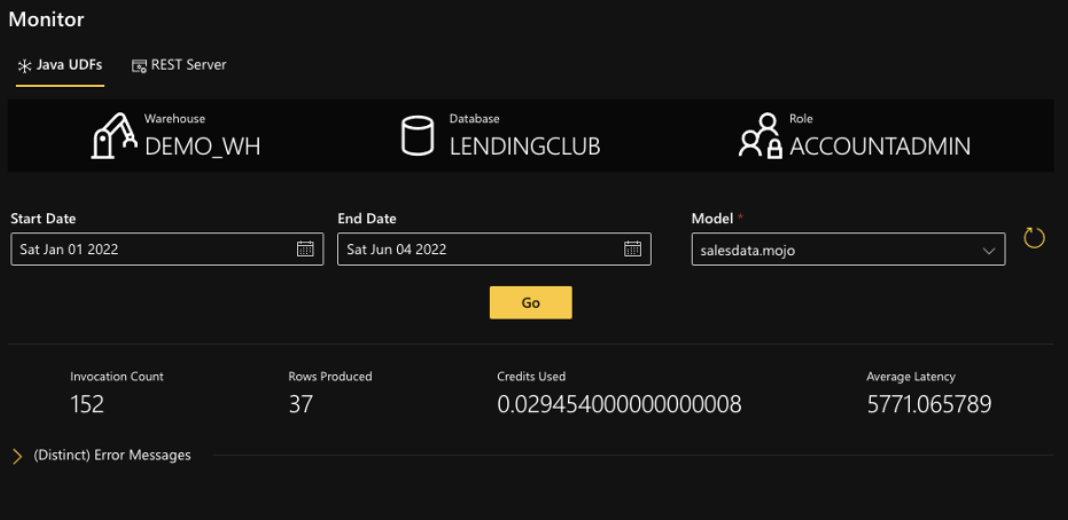

Deployed machine learning models must be managed and monitored to ensure they are operating as intended. Metrics detailing model execution can be collected and made available to Snowflake users.

With Snowflake’s new Event Tables feature, metrics for models deployed as Java UDFs can now be collected and reported within the Snowflake Integration Application. This added capability allows data scientists to deploy their models and verify how they are operating without leaving the Snowflake environment.

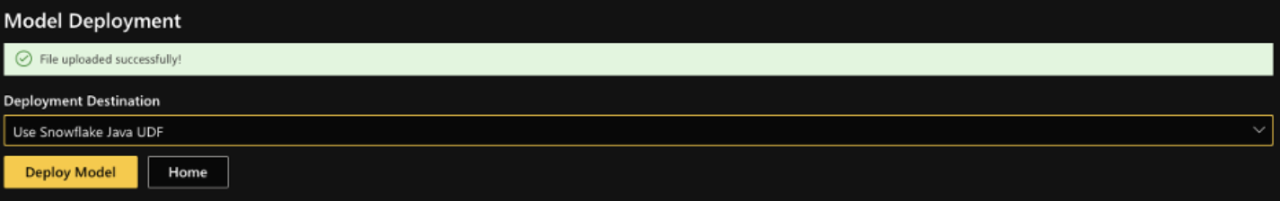

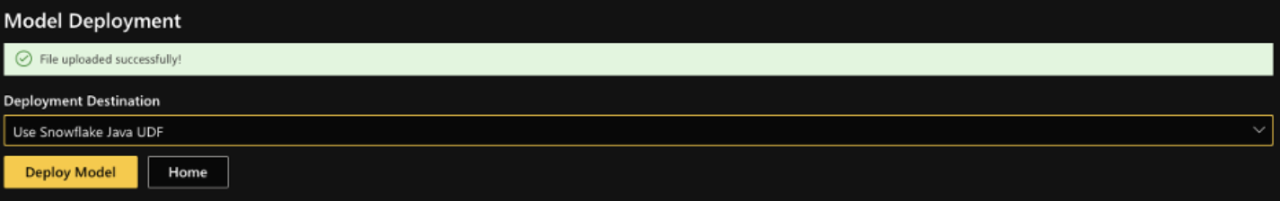

This ability enables data scientists to deploy machine learning models into the Snowflake environment for testing and scoring the data within the UI. They can also verify the results and operational metrics before promoting models to production. The production team can use the same UI to deploy the model into the Snowflake environment.

The integration uses Snowflake Java user-defined functions (UDFs) to execute models within the Snowflake environment. This moves machine learning models to where the data lives, in the Snowflake Data Cloud, which lowers scoring latency. The UDF scales with the warehouse as the data size grows.
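To make the handler shape concrete, here is a minimal sketch of a Java class that Snowflake could call as a UDF handler. The class name `ScoringHandler`, the method `score`, and the logistic model with hard-coded coefficients are all hypothetical stand-ins. The actual integration scores with the deployed H2O model, whose handler code is not shown in this post.

```java
/** Hypothetical handler: a stand-in for a deployed model, not H2O.ai's actual code. */
class ScoringHandler {
    // Assumed coefficients, for illustration only.
    private static final double INTERCEPT = 0.0;
    private static final double[] WEIGHTS = {0.8, -0.5};

    /** Invoked once per input row; returns a probability in (0, 1). */
    public static double score(double feature1, double feature2) {
        double z = INTERCEPT + WEIGHTS[0] * feature1 + WEIGHTS[1] * feature2;
        return 1.0 / (1.0 + Math.exp(-z));
    }
}
```

In Snowflake, a method like this is registered through `CREATE FUNCTION` with `LANGUAGE JAVA` and a `HANDLER` clause naming the class and method. Keeping the method stateless is what lets the warehouse run it in parallel across rows.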

The new Event Table support allows key runtime metrics to be written back to Snowflake. Those events can then be viewed in the UI, providing operational visibility with near-zero impact on the execution profiles of the models.
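As a rough illustration of what a runtime metric might look like, the sketch below accumulates a row count and mean latency and formats them as a single log line. The class `ScoringMetrics` and the field names `rows_scored` and `mean_latency_us` are assumptions made for this example and are not the integration's actual schema. In a Snowflake Java handler, a line like this could be passed to an SLF4J logger so that Snowflake records it in the active event table; whether the integration takes that route is also an assumption.

```java
import java.util.Locale;

/** Hypothetical metrics accumulator; the field names are assumptions. */
class ScoringMetrics {
    private long rowsScored = 0;
    private long totalNanos = 0;

    /** Records one scoring call and the time it took. */
    void record(long elapsedNanos) {
        rowsScored++;
        totalNanos += elapsedNanos;
    }

    long rowsScored() {
        return rowsScored;
    }

    /** Mean latency per scored row, in microseconds. */
    double meanMicros() {
        return rowsScored == 0 ? 0.0 : (totalNanos / 1_000.0) / rowsScored;
    }

    /** Formats the counters as a one-line JSON string for a log message. */
    String toLogLine() {
        return String.format(Locale.ROOT,
            "{\"rows_scored\":%d,\"mean_latency_us\":%.1f}",
            rowsScored, meanMicros());
    }
}
```

Accumulating counters and emitting one summary line, rather than logging every row, is one way to keep the overhead on the scoring path small.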

These new features make it easy for organizations to operationalize models and combine the value delivered by the H2O AI Cloud and Snowflake’s Data Cloud.

See a demo of the Snowflake Integration Application today!