Tackling Illegal, Unreported, and Unregulated (IUU) Fishing with AI

According to a report by the High-Level Panel for a Sustainable Ocean Economy, illegal, unreported, and unregulated (IUU) fishing is estimated to account for 20 percent of the world's seafood catch, and up to 50 percent in some areas. These activities not only damage the marine ecosystem but are also linked to climate change on the planet as a whole. On top of that, the annual global losses from such activities run into millions of dollars.

The Challenge – Maritime Object Detection and Classification

To address this issue, a competition named xView3 was organised last year. Its aim was to detect illegal fishing ships, also known as dark vessels, using computer vision and global Synthetic Aperture Radar (SAR) satellite imagery.

More specifically, the solution needed to meet the following requirements:

- Identify the maritime objects in each scene

- For each object, estimate its length, and classify it as vessel or non-vessel

- For each vessel, classify it as fishing or non-fishing

Detecting and Classifying Objects from Satellite Images

“xView is a series of international computer vision competitions run by the Defense Innovation Unit and Global Fishing Watch to advance, benchmark, and procure state-of-the-art computational solutions in domains relevant to national security. We have partnered with Department of Defense organizations, federal, state, and local first responders, and non-governmental organizations to create and release big, high-quality, open datasets aligned to specific prediction tasks that are relevant to national security and the world at large.” – xView3

The Team

As part of our mission, H2O.ai believes in giving back to the community, and AI for good lies at the core of what we do. We want to use our knowledge, tools, and expertise to help fight the dangers that affect our ecosystem. To that end, two of our Kaggle Grandmasters, Ryan Chesler and Guanshuo Xu, participated in this competition and secured a position in the top ten. We had a short chat with them to find out more about their background, their motivation, and their key takeaways from this competition.

Both Ryan and Guanshuo have a particular affinity for machine learning competitions. Guanshuo is the former number one in the Kaggle competitions category. Ryan finds working with satellite imagery fascinating due to the enormous wealth of data and its broad applicability. He even won a silver medal in a Kaggle competition that involved classifying and segmenting clouds from satellite images. Guanshuo was similarly drawn to the problem: according to him, the large volume of SAR images, the object detection focus, and the challenge posed by the missing labels in the training set made this competition worth attempting.

The Methodology

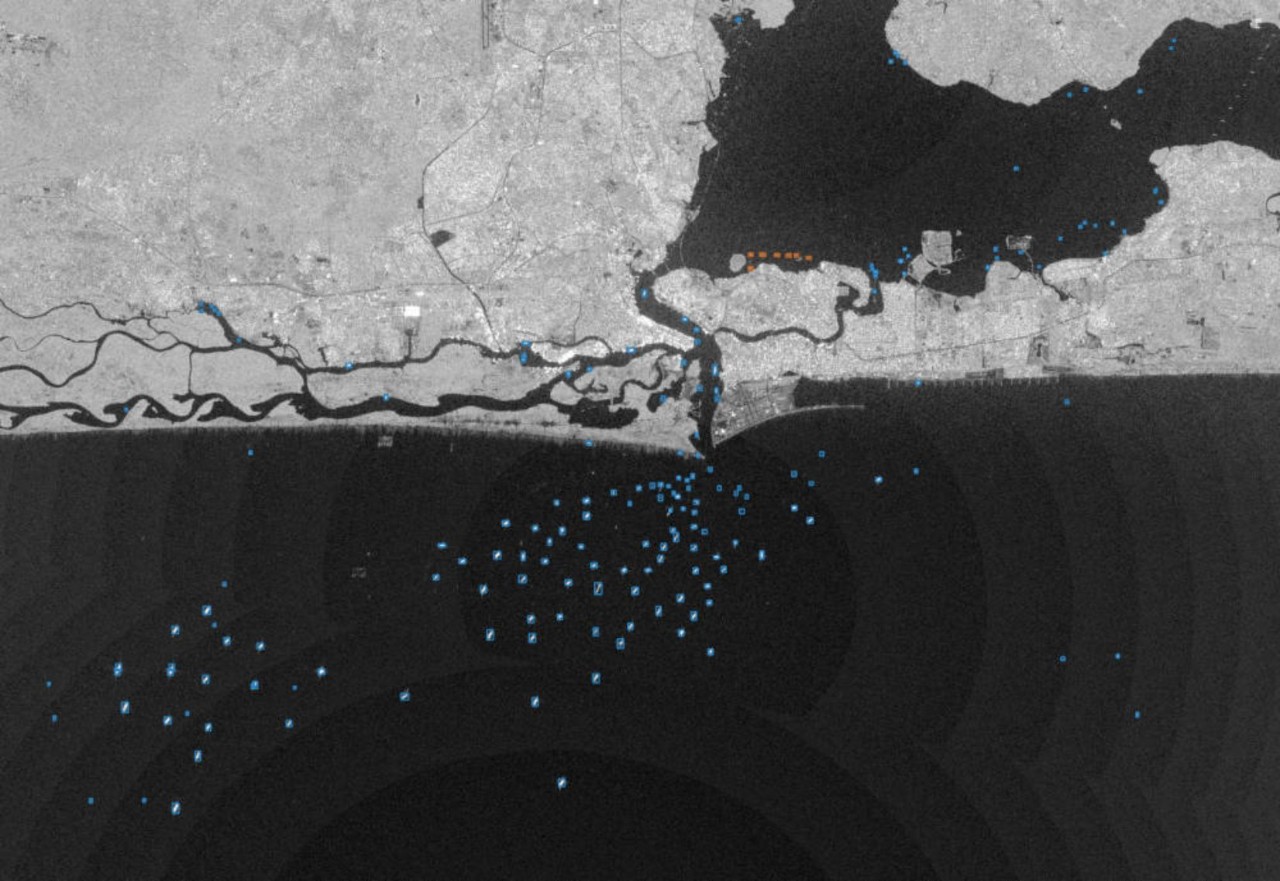

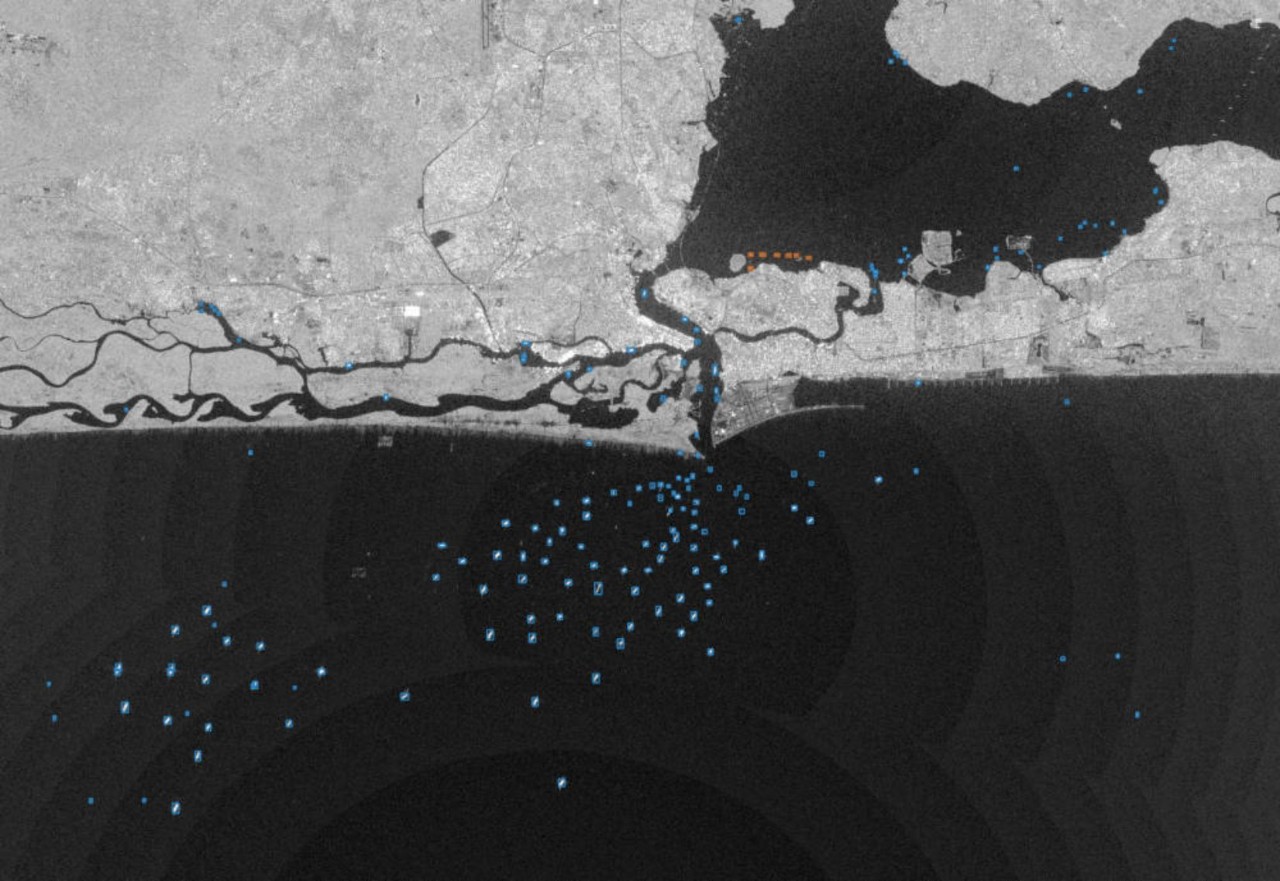

Let’s talk about the methodology. The first step was to split the high-resolution images (roughly 30,000 x 30,000 pixels each, covering multiple kilometers of the globe) into smaller sub-images that a model can process.
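To make the sub-image preparation concrete, here is a minimal sketch of how a large scene could be cut into overlapping tiles. The tile size of 1024 pixels, the overlap of 128 pixels, and the function names are illustrative assumptions, not the values or code the team used.

```python
def tile_origins(size, tile, overlap):
    """Return start offsets so that tiles of length `tile` cover [0, size)."""
    if overlap >= tile:
        raise ValueError("overlap must be smaller than the tile size")
    if tile >= size:
        return [0]  # a single tile already covers this axis
    stride = tile - overlap
    origins = list(range(0, size - tile, stride))
    origins.append(size - tile)  # last tile sits flush with the scene edge
    return origins


def tile_windows(height, width, tile=1024, overlap=128):
    """Return the (row, col) origin of every tile in a height x width scene.

    With an array-like scene, the sub-image for an origin would be
    scene[row:row + tile, col:col + tile].
    """
    return [
        (row, col)
        for row in tile_origins(height, tile, overlap)
        for col in tile_origins(width, tile, overlap)
    ]


def to_scene_coords(origin, row_in_tile, col_in_tile):
    """Map a position inside a tile back to full-scene pixel coordinates."""
    return origin[0] + row_in_tile, origin[1] + col_in_tile
```

The overlap is there so that a ship sitting on a tile border still appears whole in at least one neighbouring tile. The last helper shows how a detection found inside a tile can be placed back in the full scene.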

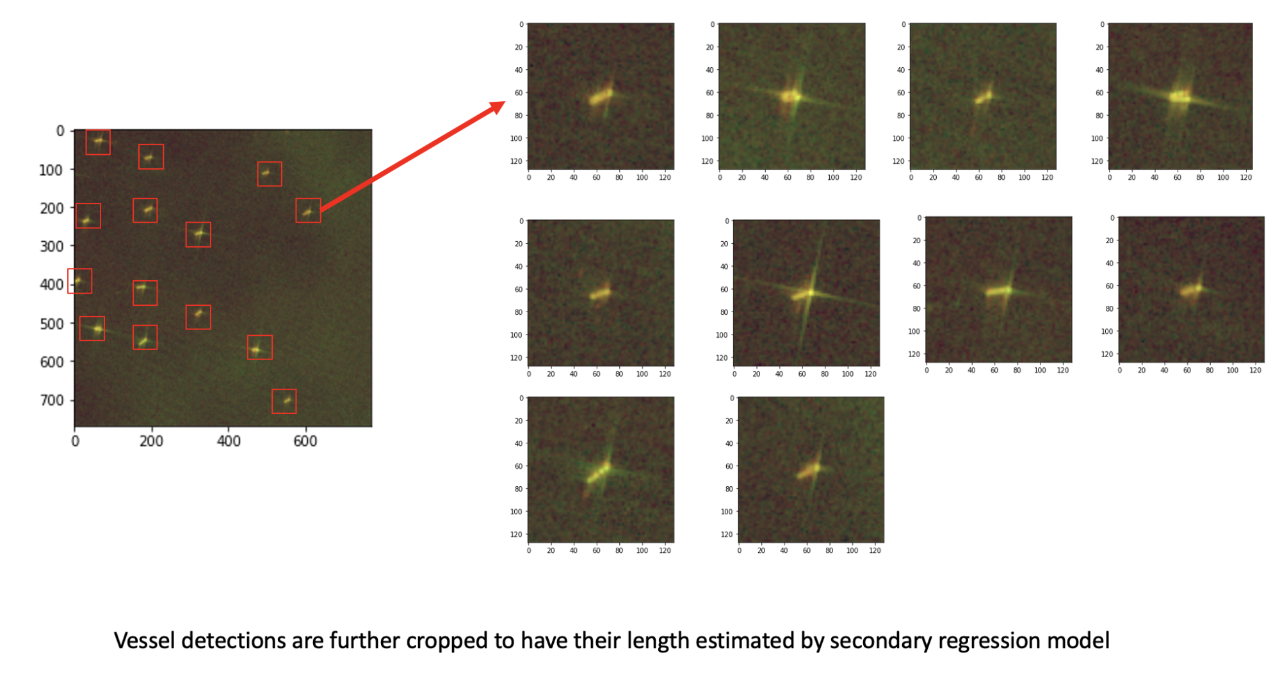

Example Satellite Images

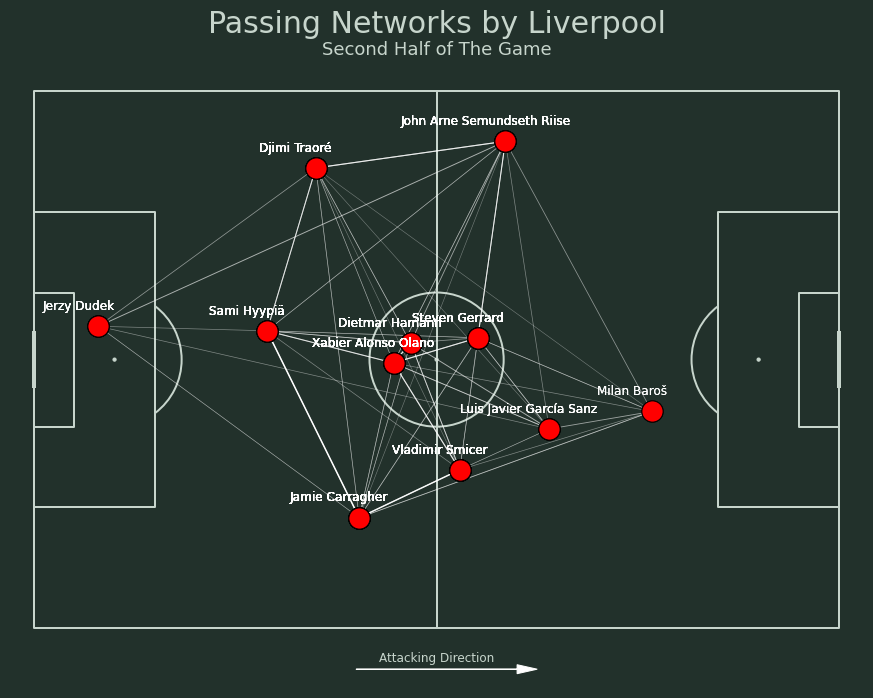

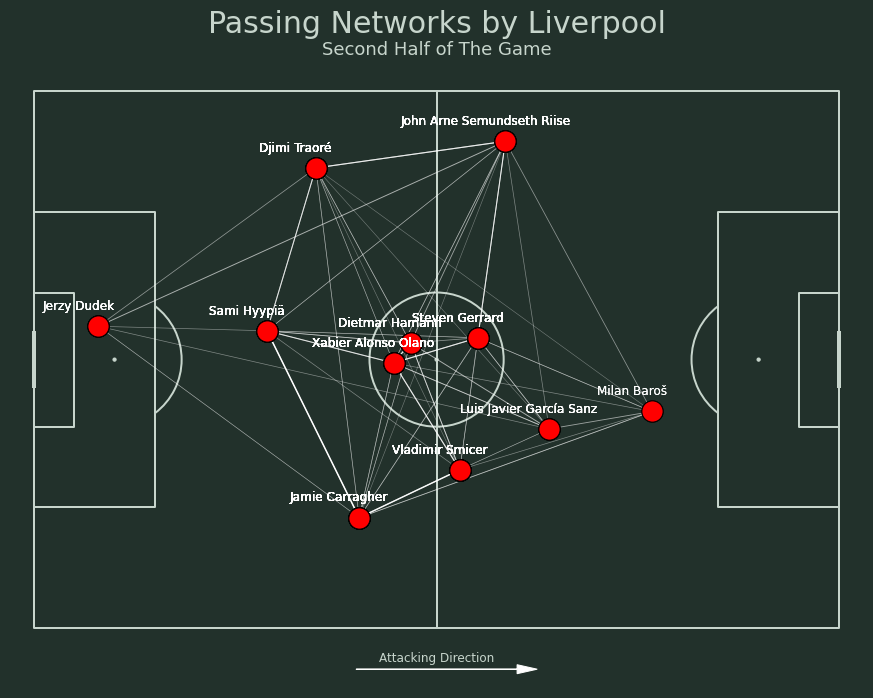

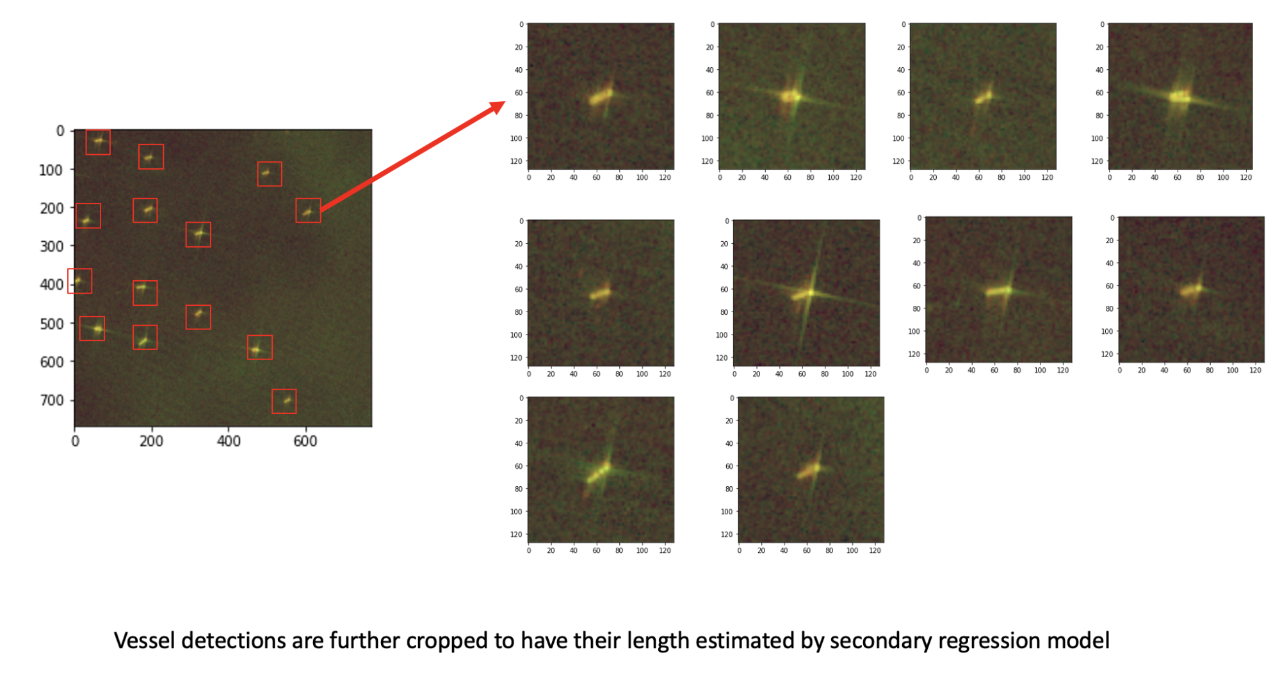

They then trained YOLOv5 (You Only Look Once) as an object detection model to locate and classify the ships. Whenever the YOLOv5 model detected a ship, they passed that small region to a secondary Convolutional Neural Network (CNN) model for length estimation. The secondary CNN was needed because the YOLOv5 model could not easily estimate length on its own.

YOLOv5 for Object Detection (Left) followed by CNN for Length Estimation (Right)
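The hand-off from the detector to the length model can be sketched as follows. This is only an illustration under stated assumptions: the 64-pixel chip size, the detection dictionaries with `row` and `col` keys, and the `length_model` callable are all hypothetical stand-ins, and the scene is treated as a plain 2-D sequence of pixel rows.

```python
def chip_window(center_row, center_col, scene_height, scene_width, chip=64):
    """Return (top, left, bottom, right) of a chip around a detection.

    The window is shifted, not shrunk, when the detection is near an edge,
    so the crop keeps its full size whenever the scene is large enough.
    """
    half = chip // 2
    top = min(max(center_row - half, 0), max(scene_height - chip, 0))
    left = min(max(center_col - half, 0), max(scene_width - chip, 0))
    bottom = min(top + chip, scene_height)
    right = min(left + chip, scene_width)
    return top, left, bottom, right


def estimate_lengths(scene, detections, length_model, chip=64):
    """Crop a chip around each detection and ask a second model for a length.

    `length_model` is a hypothetical callable that takes the cropped pixels
    and returns a length estimate; any regression CNN could fill that role.
    """
    height, width = len(scene), len(scene[0])
    results = []
    for det in detections:
        top, left, bottom, right = chip_window(
            det["row"], det["col"], height, width, chip
        )
        crop = [row[left:right] for row in scene[top:bottom]]
        results.append({**det, "length": length_model(crop)})
    return results
```

Keeping the crop a fixed size means the second model always sees inputs of the same shape, which is the usual requirement for a CNN regressor.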

They also observed an interesting pattern in the dataset. While the training set had a large number of missing labels, the validation set had no such problem. They therefore pretrained the YOLOv5 model on the training set and fine-tuned it on the validation set. This simple two-stage training significantly mitigated the labeling issue. Finally, for inference, they used only a single YOLO model for vessel localization and classification. Inference took less than 30 seconds to process a whole SAR image.
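The two-stage schedule described above can be written down as a small framework-agnostic sketch. The epoch counts, the learning rates (including the lower rate for the second stage), and the `fit_one_epoch` callback are assumptions made for illustration; the source does not state the actual settings.

```python
def two_stage_training(fit_one_epoch, noisy_train_set, clean_val_set,
                       pretrain_epochs=3, finetune_epochs=2,
                       pretrain_lr=1e-2, finetune_lr=1e-3):
    """Pretrain on the noisily labeled set, then fine-tune on the clean set.

    `fit_one_epoch(dataset, lr)` is a hypothetical callback that updates the
    model in place for one pass over `dataset` and returns the epoch loss.
    """
    history = []
    # Stage 1: learn general features from the large set with missing labels.
    for epoch in range(pretrain_epochs):
        loss = fit_one_epoch(noisy_train_set, pretrain_lr)
        history.append(("pretrain", epoch, loss))
    # Stage 2: correct the bias from missing labels using the clean set.
    for epoch in range(finetune_epochs):
        loss = fit_one_epoch(clean_val_set, finetune_lr)
        history.append(("finetune", epoch, loss))
    return history
```

The point of the ordering is that the clean data is seen last, so the final weights are shaped by reliable labels even though most of the training volume came from the noisier set.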

The Key Takeaways

There was also a fair share of learning for both of them. For Ryan, the biggest takeaway was the fact that the object detection models perform phenomenally well for a task like this. He adds, “one quirk about this data was that we were given individual coordinates of the ships rather than bounding boxes, so it wasn’t immediately obvious that it was an object detection problem, but we assigned fixed-size boxes to these points, and it seemed to do really well.” Guanshuo further adds, “I’m also surprised that object detection models work perfectly out-of-the-box even on this a little bit non-standard object detection problem.”
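Ryan's remark about turning point coordinates into fixed-size boxes can be illustrated with a short sketch. The 32-pixel box size and the helper names are assumptions; the output line follows the normalized `class x_center y_center width height` text format that YOLOv5 reads for training labels.

```python
def point_to_box(x, y, tile_width, tile_height, box=32):
    """Turn a point label into a normalized (x_center, y_center, w, h) box.

    The box has a fixed pixel size and is clipped at the tile borders, so
    points near an edge produce a smaller box that stays inside the tile.
    """
    half = box / 2
    x_min, x_max = max(x - half, 0), min(x + half, tile_width)
    y_min, y_max = max(y - half, 0), min(y + half, tile_height)
    return (
        (x_min + x_max) / 2 / tile_width,
        (y_min + y_max) / 2 / tile_height,
        (x_max - x_min) / tile_width,
        (y_max - y_min) / tile_height,
    )


def format_yolo_label(class_id, x, y, tile_width, tile_height, box=32):
    """Format one point label as a YOLO-style annotation line."""
    xc, yc, w, h = point_to_box(x, y, tile_width, tile_height, box)
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


# Example: a point at pixel (512, 256) in a 1024 x 1024 tile, class 1.
print(format_yolo_label(1, 512, 256, 1024, 1024))
```

Because every object gets the same box size, the detector is effectively asked only where an object is, which matches the quote above that length had to be estimated by a separate model.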

Competitions like xView give the vast data science community a chance to apply their machine learning skills to tackle dangerous problems like illegal fishing. Both Ryan and Guanshuo demonstrated how efficient use of AI technologies can support the various authorities involved in securing our waters and, in turn, our ecosystem.

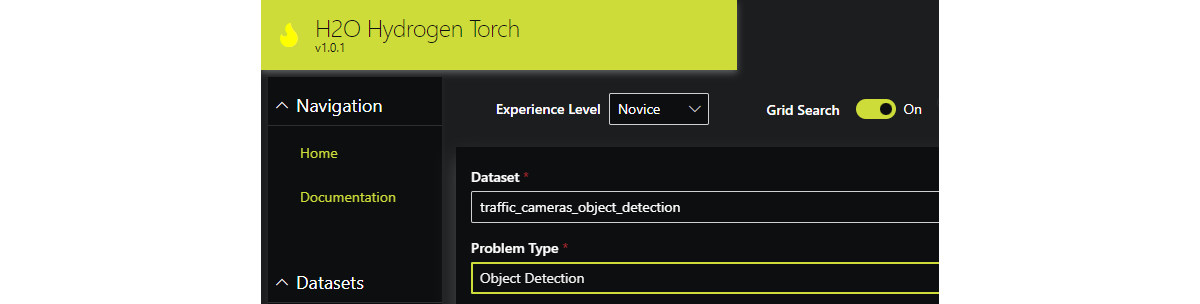

Democratizing Deep Learning with H2O Hydrogen Torch

Complex deep learning tasks such as object detection can be tricky for beginners. In order to lower the entry barriers, we developed H2O Hydrogen Torch – a no-code framework for training and deploying state-of-the-art deep learning models for various problems including object detection. H2O Hydrogen Torch is available on H2O AI Cloud so you can try this no-code object detection right now. Request a demo today.

Train state-of-the-art deep neural networks on a large set of diverse problem types

Try no-code object detection using our example datasets or your own datasets